Data matching, also known as record linkage or deduplication, is the process of identifying and linking related records across multiple datasets. It's the secret sauce that transforms raw, messy data into a valuable asset for marketing, sales, or data analysis.

Most available software tools are complex and costly. Datablist is an online application, compatible with Mac OS, Microsoft Windows, and Linux, to perform data matching fast. It implements exact match, phonetic, and advanced fuzzy matching algorithms.

In this guide, you will learn to demystify the complexities of data matching. Whether you're a novice looking to get started or an experienced data professional seeking to optimize your skills, this tutorial offers something for everyone.

Here is a quick summary of the Data Matching points you will find in this article:

- Loading your datasets

- Data Preparation with Cleaning and Normalization

- Match records within or across several data collections

- Deduplicate: remove or merge matching groups

Start using our online Data Matching tool in seconds. No sales calls or features on PowerPoints.

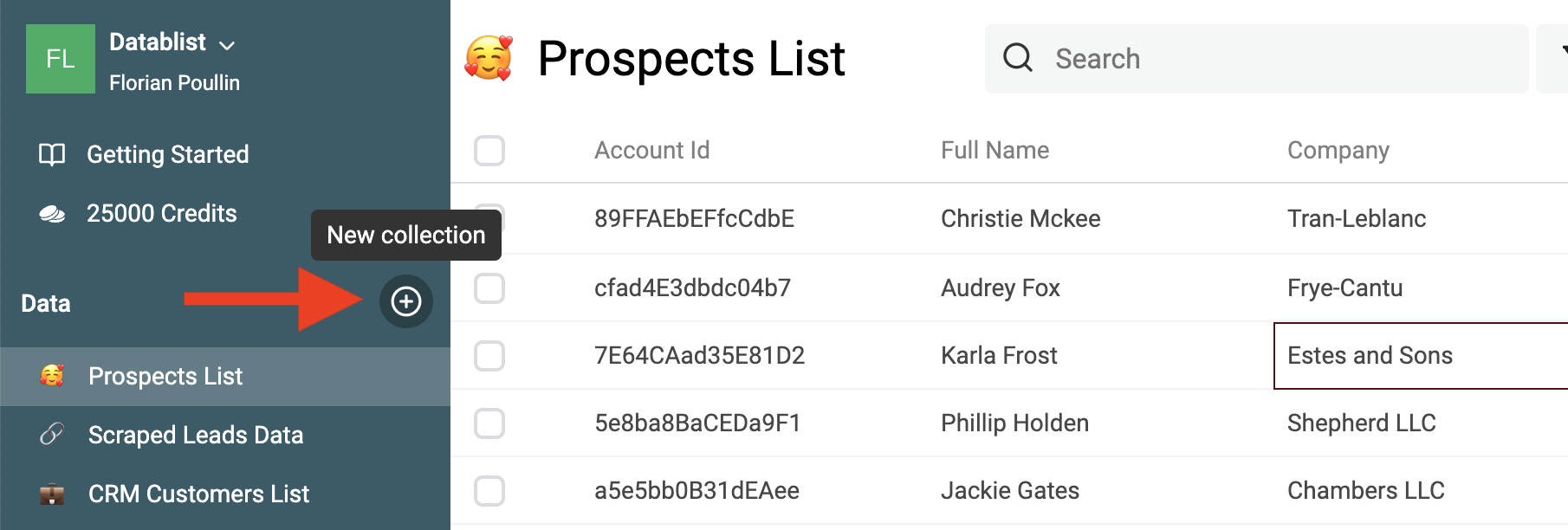

Step 1: Load your datasets

The first step is to load your datasets into Datablist. Datablist is an online tool for list management. You can view and edit CSV files and Excel files. It's the perfect tool to manage your leads list, clean customer data, or clean scraped data.

To start, create a collection to load your first dataset.

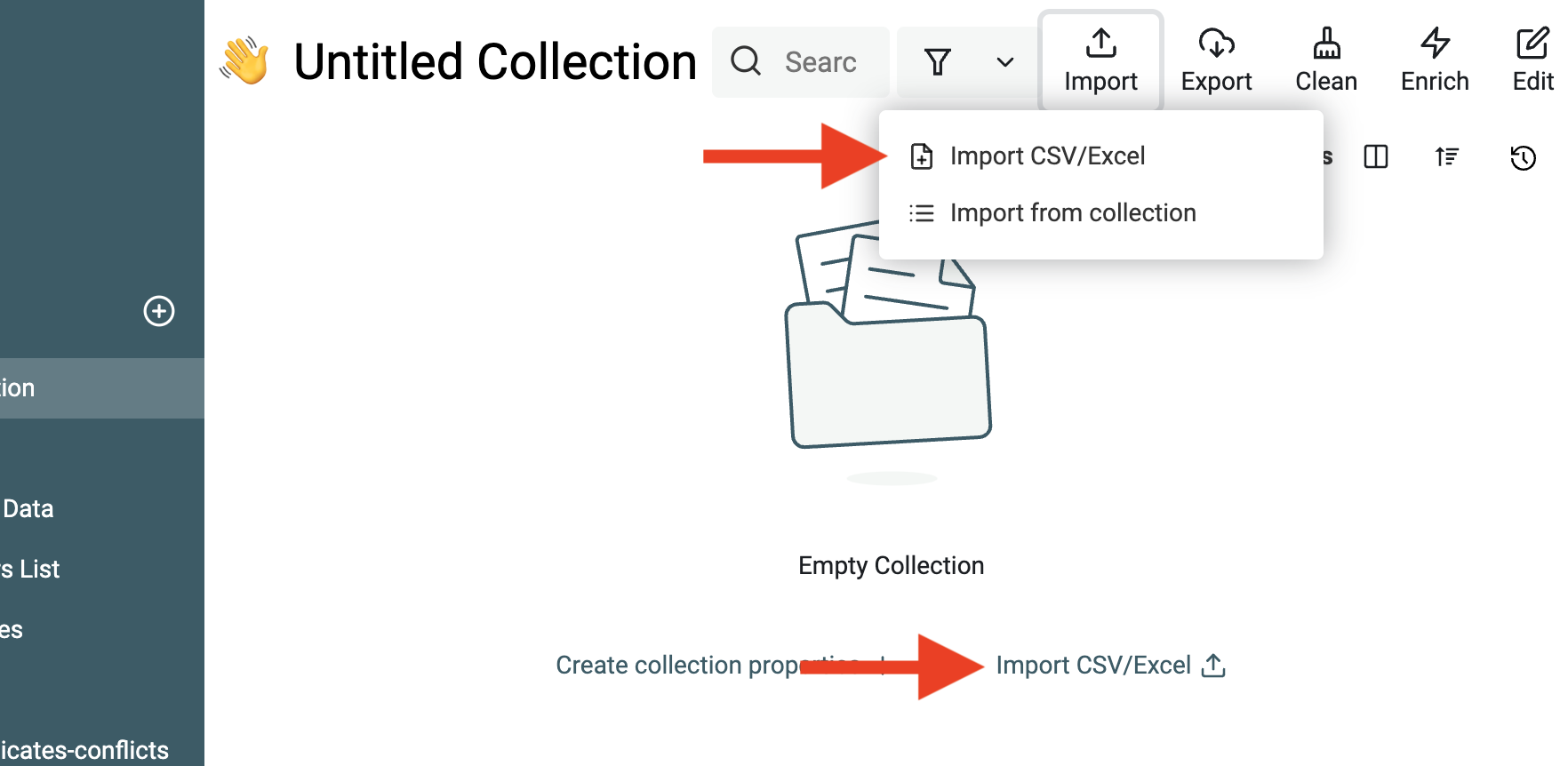

Then, click the Import button.

Once your first dataset is imported. You can either:

- Import other datasets with common data structures into the same collection.

- Import datasets with different data structures into new collections.

Datablist Duplicates Finder finds matching records within a single collection or across collections with different data structures.

Step 2: Clean your data if needed

The second step is Data Cleansing. Data cleaning is an essential prerequisite for data matching. It ensures the accuracy and reliability of the matching process. Dirty or unclean data can lead to incorrect matches and unreliable results. In essence, data cleaning paves the way for successful data matching.

When they appear in names (people names, company names), they add nothing but might prevent the deduplication algorithm from detecting duplicates.

Datablist comes with a list of built-in data-cleaning tools:

- Remove symbols and punctuation - Scraped texts might have smileys and ASCII symbols. Or names with punctuation. Datablist matching algorithm ignore them for data matching, but they will prevent automatic merging during the deduplication process.

- Remove extra spaces - An extra space between words is enough to make two strings different. Datablist matching algorithms pre-process text values to remove extra spaces, but they will also prevent automatic merging during the deduplication process.

- Extract email addresses, URLs, etc. from texts - If your data has unstructured text containing email addresses, URLs, member mentions, tags, etc. Use our Data Extractor to get those entities and structure your data. Your Data Matching process will be easier with structured entities to match.

- Remove HTML tags - Another cleaning feature is the ability to get plain text from strings with HTML tags. This lets you match scraped lists containing HTML tags with your other datasets.

- Convert text to DateTime, Number, Boolean, etc. - Datablist offers real data structure with built-in DateTime, Number, Boolean, etc. format. An important step for data cleansing is to convert raw text to a native format. Native Datetime, number, etc. are crucial for advanced merging rules when you need to select a master item based on value comparison (for example, the most recent date).

- Change text case to get consistent formatting - Text case transformation is a simple yet necessary step. Datablist has several built-in case transformation algorithms).

- Split or merge properties - This feature is perfect for dealing with multi-value data. If a property contains several email addresses separated with commas/semicolons/spaces, Datablist Split Property tool will create several properties with a single email address.

- Remove or replace empty values - Use Datablist Filtering features to filter empty values or empty rows.

Check our guide on data cleaning for more examples and instructions on data cleansing.

Normalize people names

Dealing with datasets of people is common in Data Deduplication. Customers, Leads, and prospects datasets are good examples of lists of people. In the best case, data matching on people datasets relies on unique identifiers such as email addresses or identity numbers. Without such identifiers, or to match people across datasets, you will need to use people's names for the data-matching process.

Pre-processing people's names ensures that all names follow a uniform format, reducing errors during deduplication.

Remove noises from names

People's names can be highly variable. Nicknames, abbreviations, alternate spellings, and use of special characters are common variations.

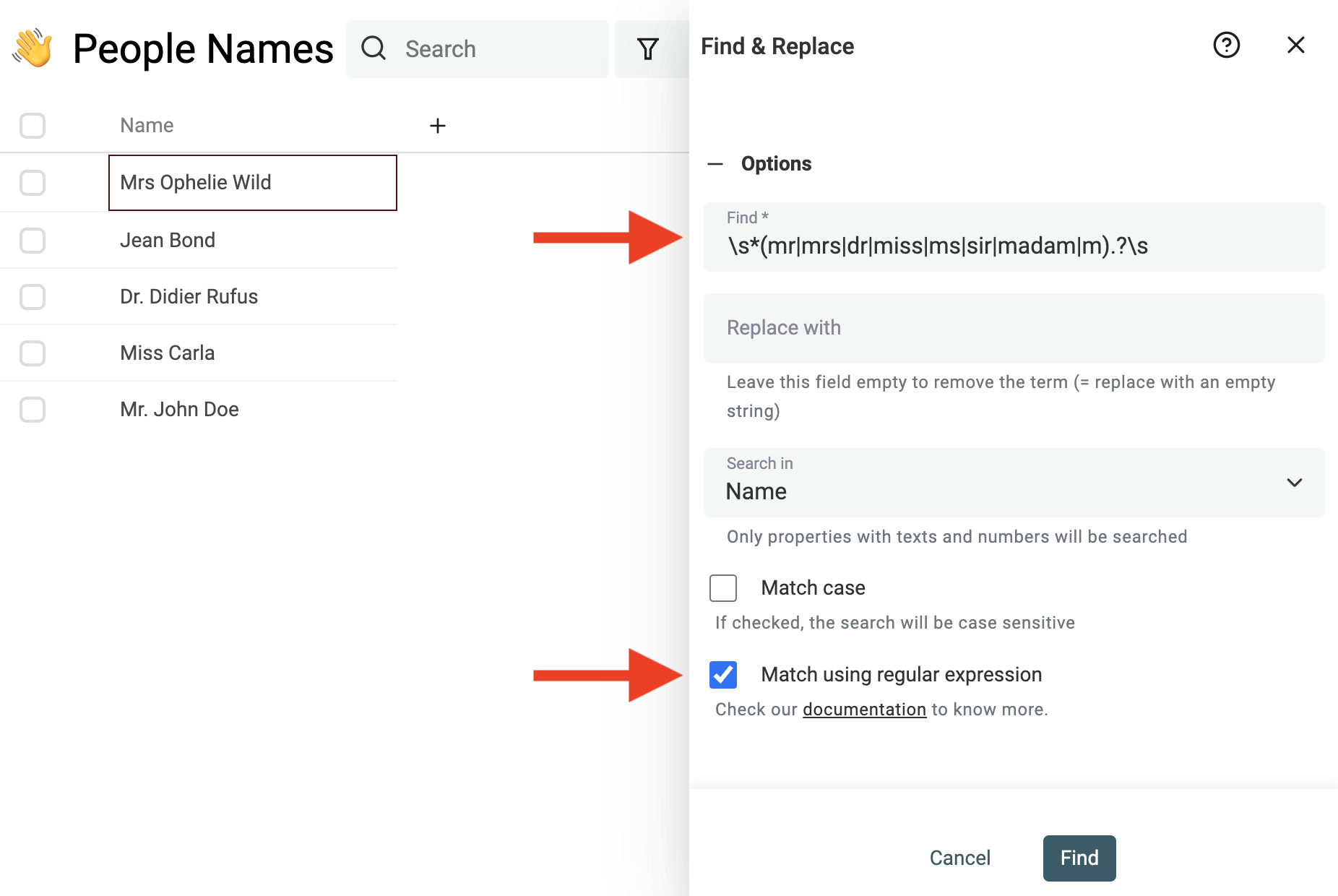

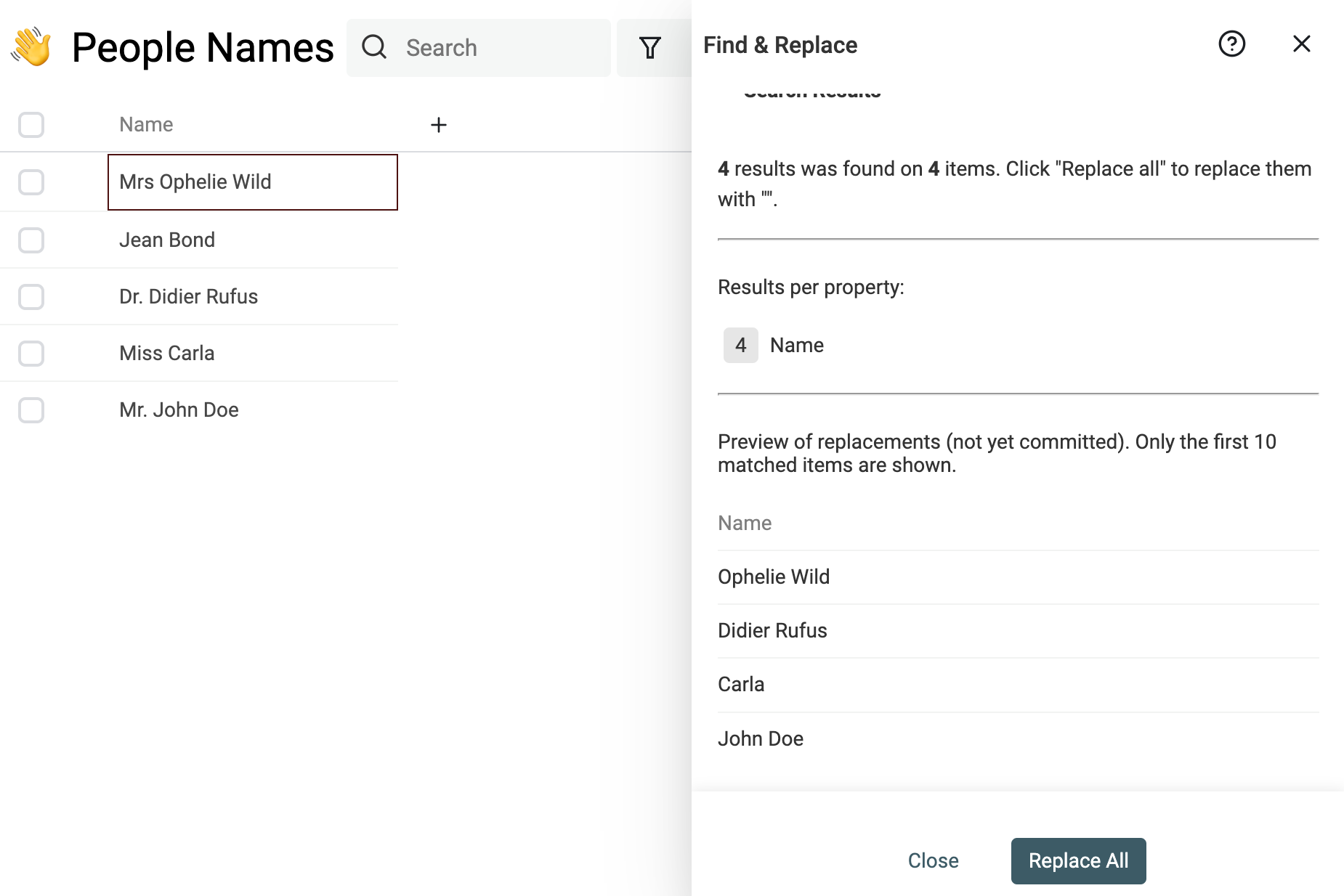

Use the powerful Find & Replace tool to remove prefixes, suffixes, stop-words, regional addendums, and other useless words.

For example, to remove name titles, you can use this regular expression:

^\s*(mr|mrs|dr|miss|ms|sir|madam|m).?\s

And replace it with an empty string.

Notes If you are not familiar with Regular Expressions, just contact us and we'll help you clean your data.

Parse full name into parts

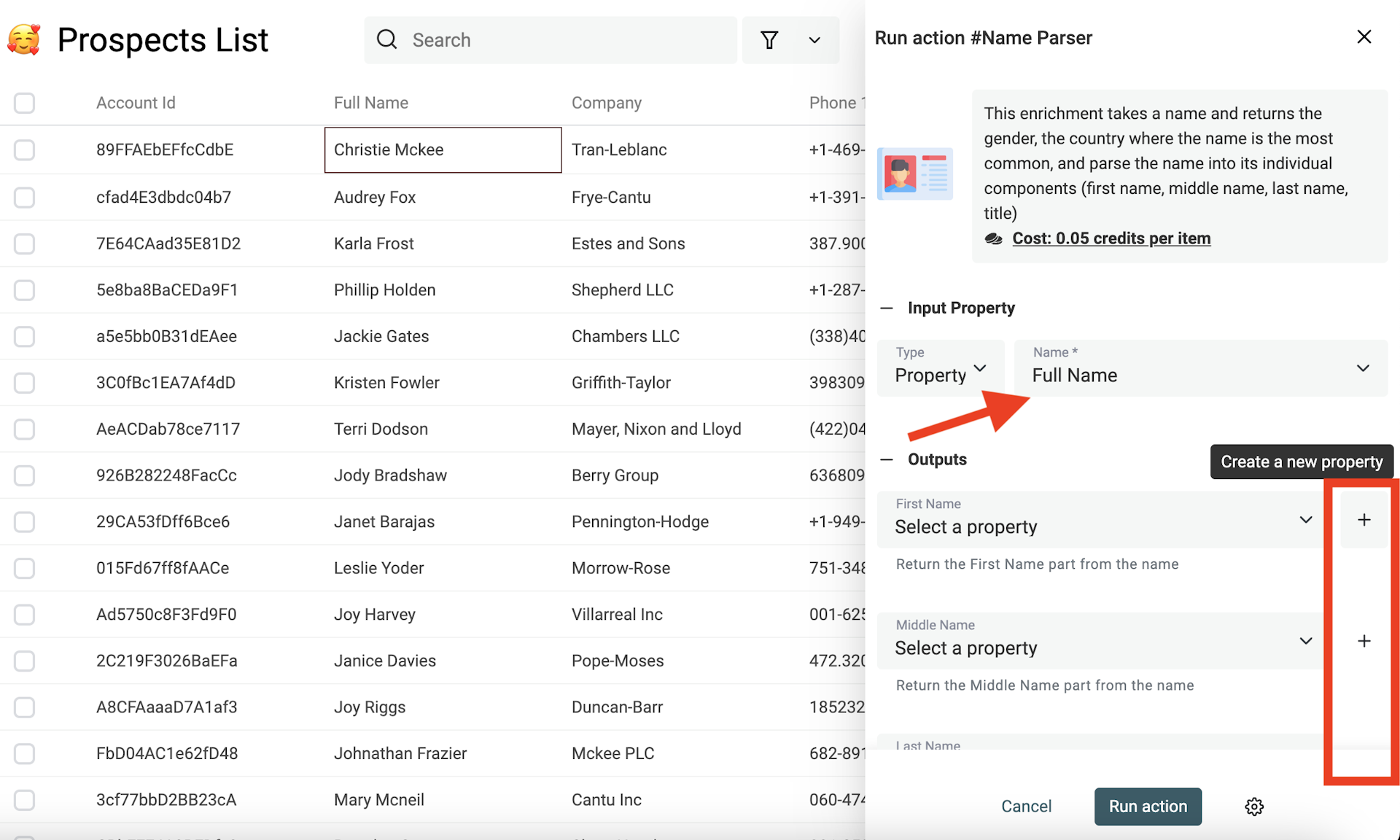

Datablist is more than a data-cleaning tool and provides data enrichment. Examples of enrichment are lead enrichments, or csv translations using Deepl.

The Name Parser is a perfect enrichment for cleaning people's names. It takes a full name and returns its parts: first name, middle name, last name. And return the most common gender and country for the name.

It uses statistical data to split the full names.

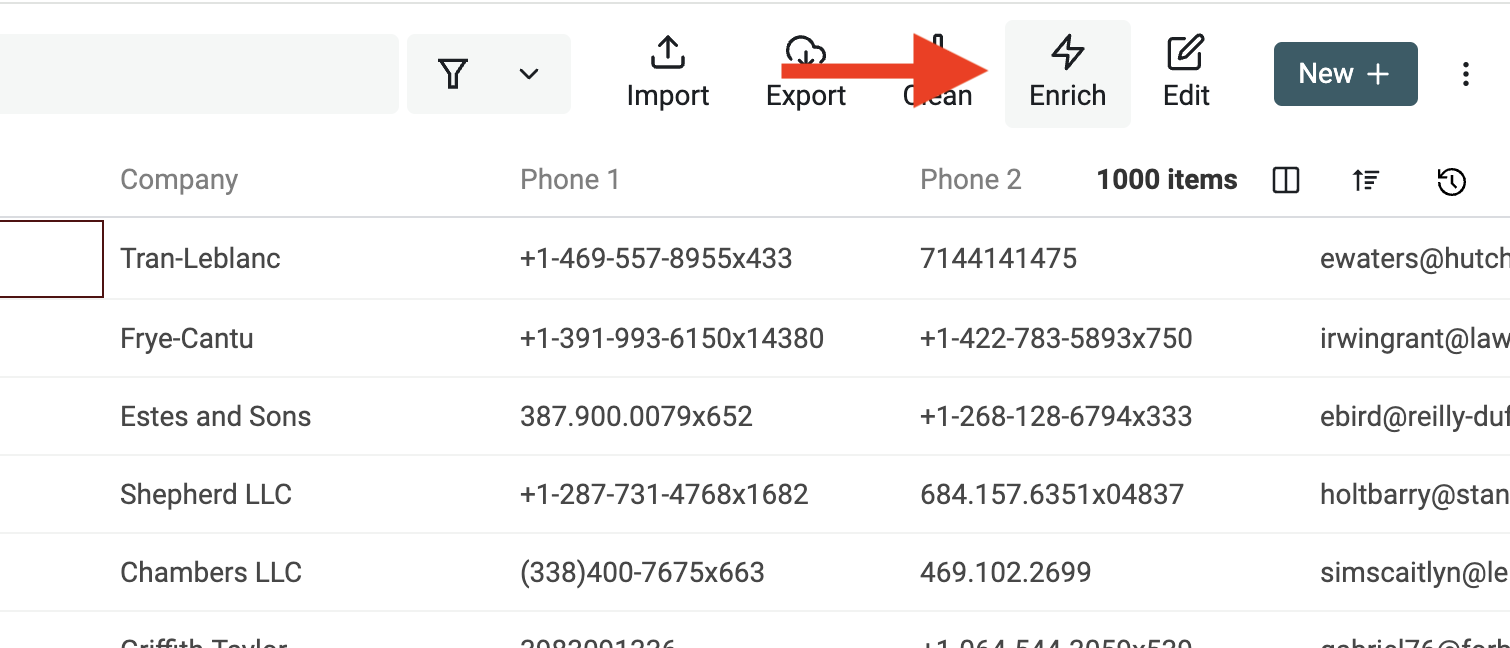

To use it, open the "Enrich Menu" from the top buttons.

Then select "Name Parser".

Then, select the property with name values. And map or create new property to store the results of the parsing. The full name property won't be edited, only the output properties will be edited with the results.

Normalize company names

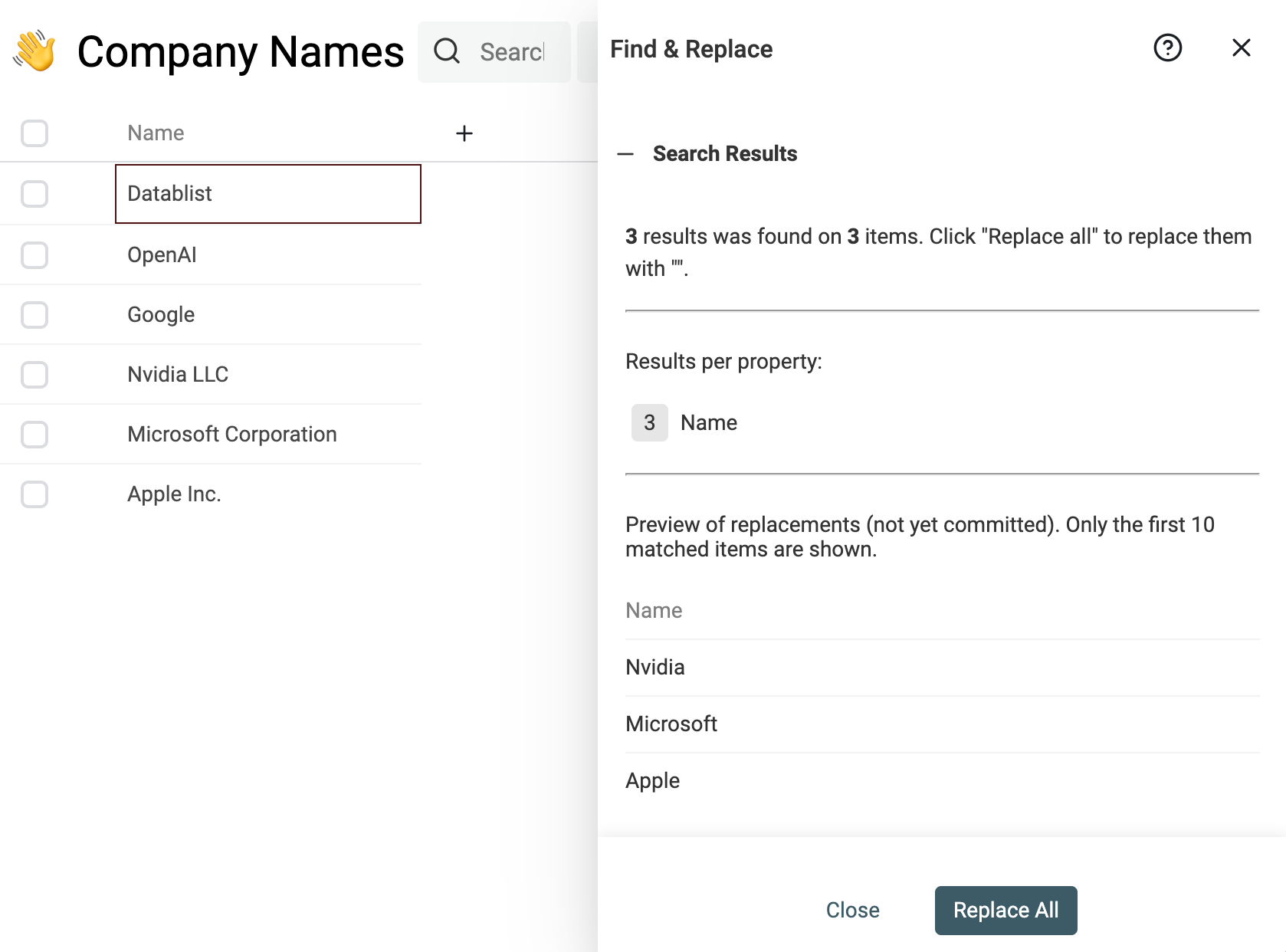

You can remove noise from company names as well. If you have prefixes, suffixes, stop-words, regional addenda, or others that prevent good matching.

An example of cleaning is removing company name suffixes such as "Inc." or "GmbH".

Use this regular expression in the Find & Replace tool:

,?\s(llc|inc|incorporated|corporation|corp|co|gmbh|ltd).?$

And replace it with an empty string.

Normalizing company names and addresses across all datasets is important to bring them into a standard format.

Normalize street names

If you are doing Data Matching on postal addresses, street name normalization is crucial. Addresses can be written with abbreviations, directional prefixes, or numeral suffixes. Without normalization, the same street can appear multiple times under different representations, making data matching a challenging task.

For example: Main 9 St, Main 9TH St., and Main 9th Street refer to the same street name. Or Washington Blvd and Washington Boulevard.

Using fuzzy algorithms to deal with those differences is inefficient. Several letter changes are needed between Washington Blvd and Washington Boulevard. And the similarity distance calculated using fuzzy-matching algorithms would be high.

A better way to deal with variation in street names is to normalize your street names. A consistent format ensures consistency.

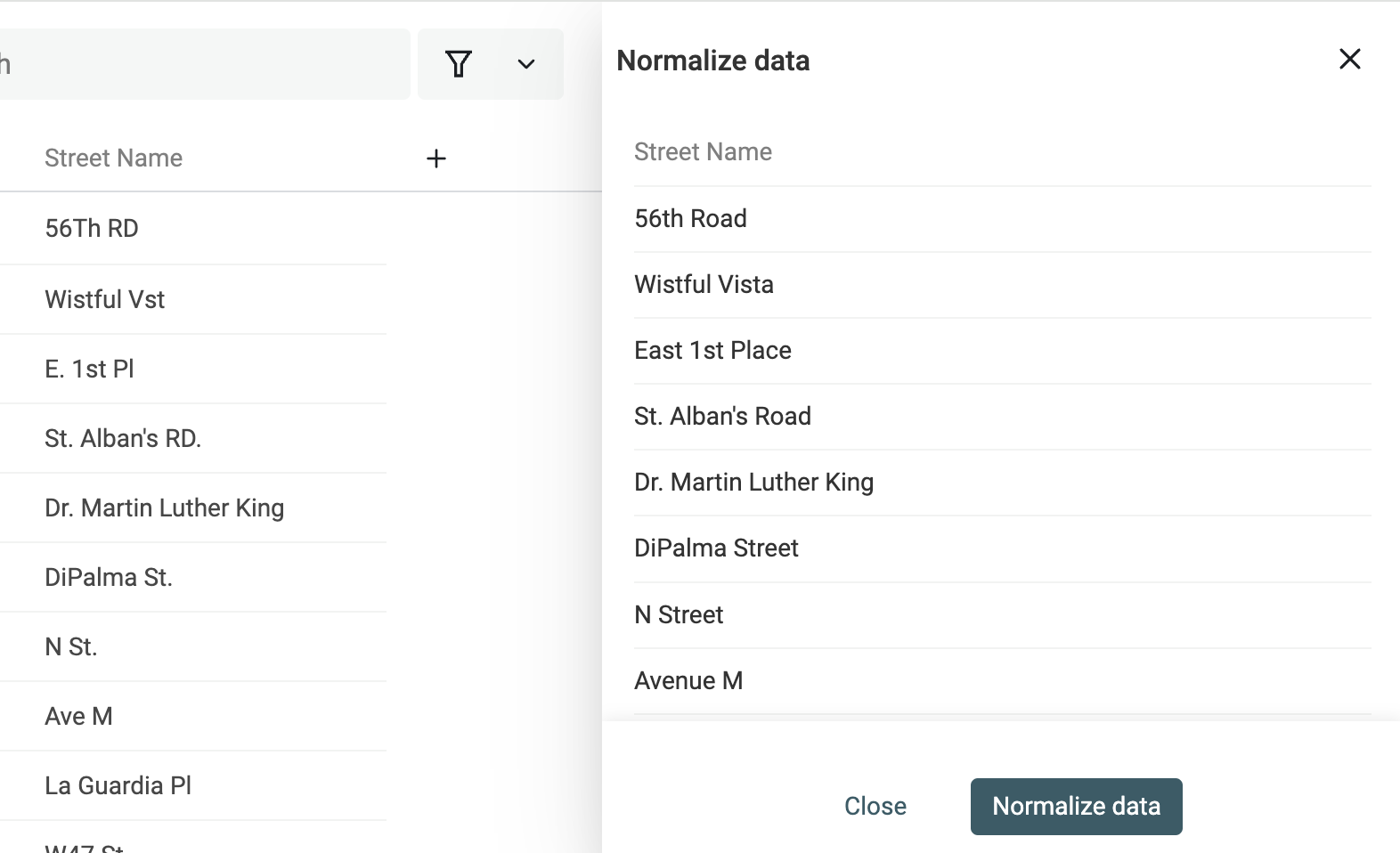

Datablist offers street name normalization for English street name formats. It normalizes abbreviations, street numbers, etc.

Notes

The street name normalization works for split addresses. The street name information must be in a distinct property. Full address values would not work.

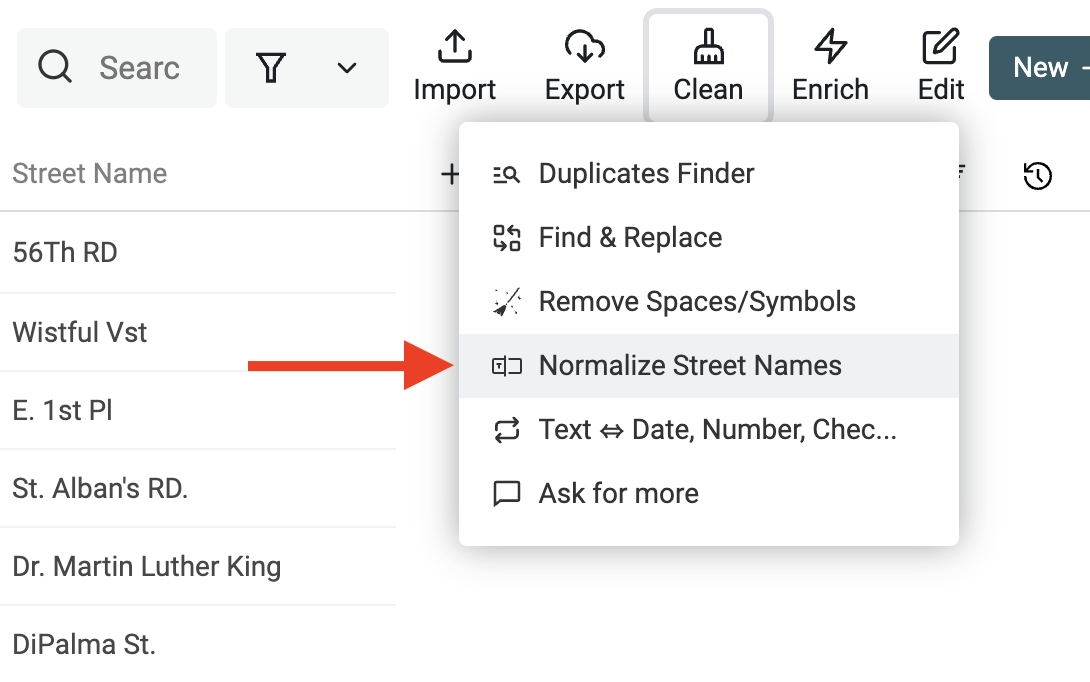

Click on "Normalize Street Names" from the "Clean" menu.

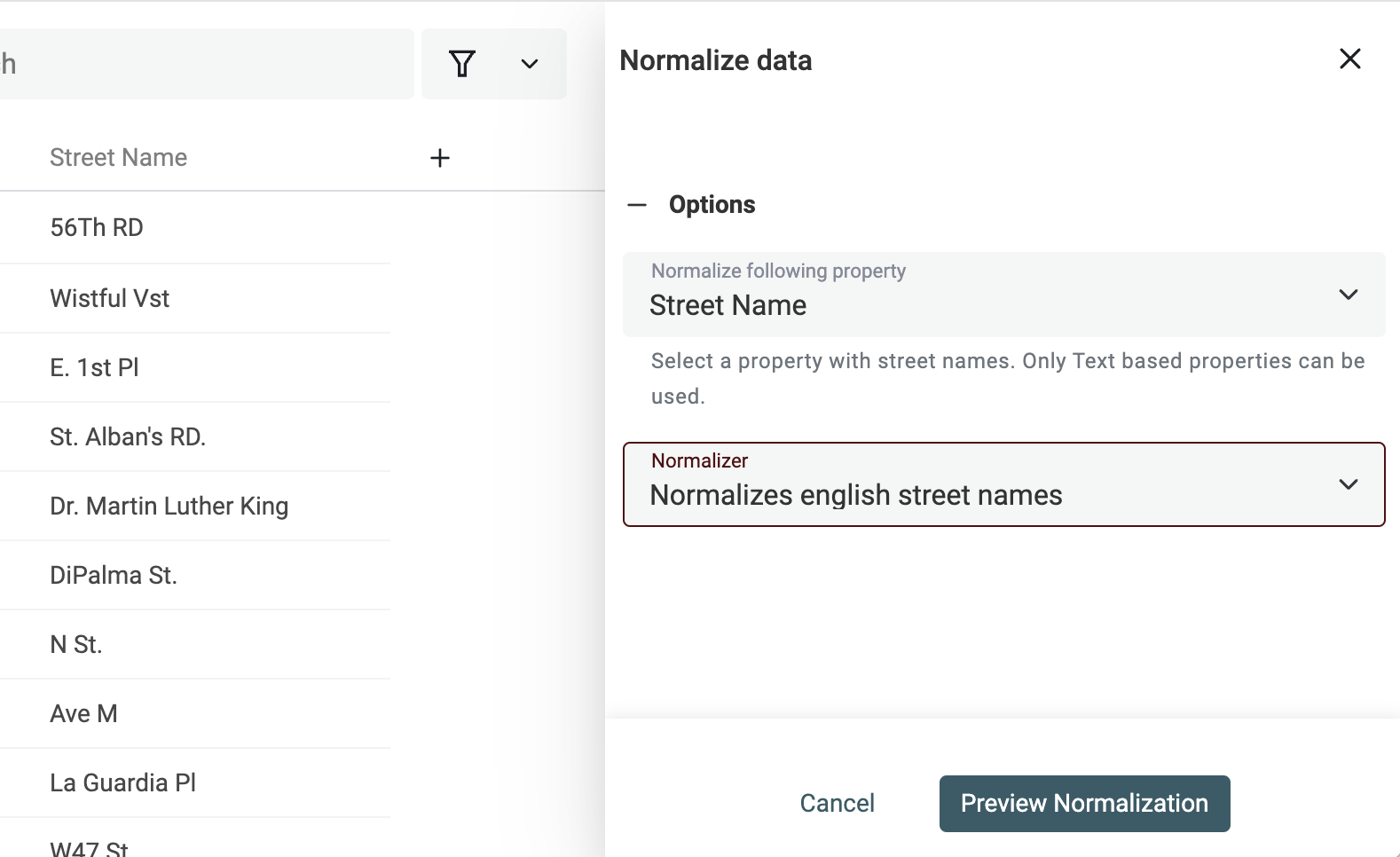

Then, select the property with street names, and pick "Normalize english street names".

Check the preview for the changes, and click "Run".

Step 3: Match records within or across several data collections

Now that your data is clean and normalized, it's time to move to the data-matching step. In this step, we want to group similar records together.

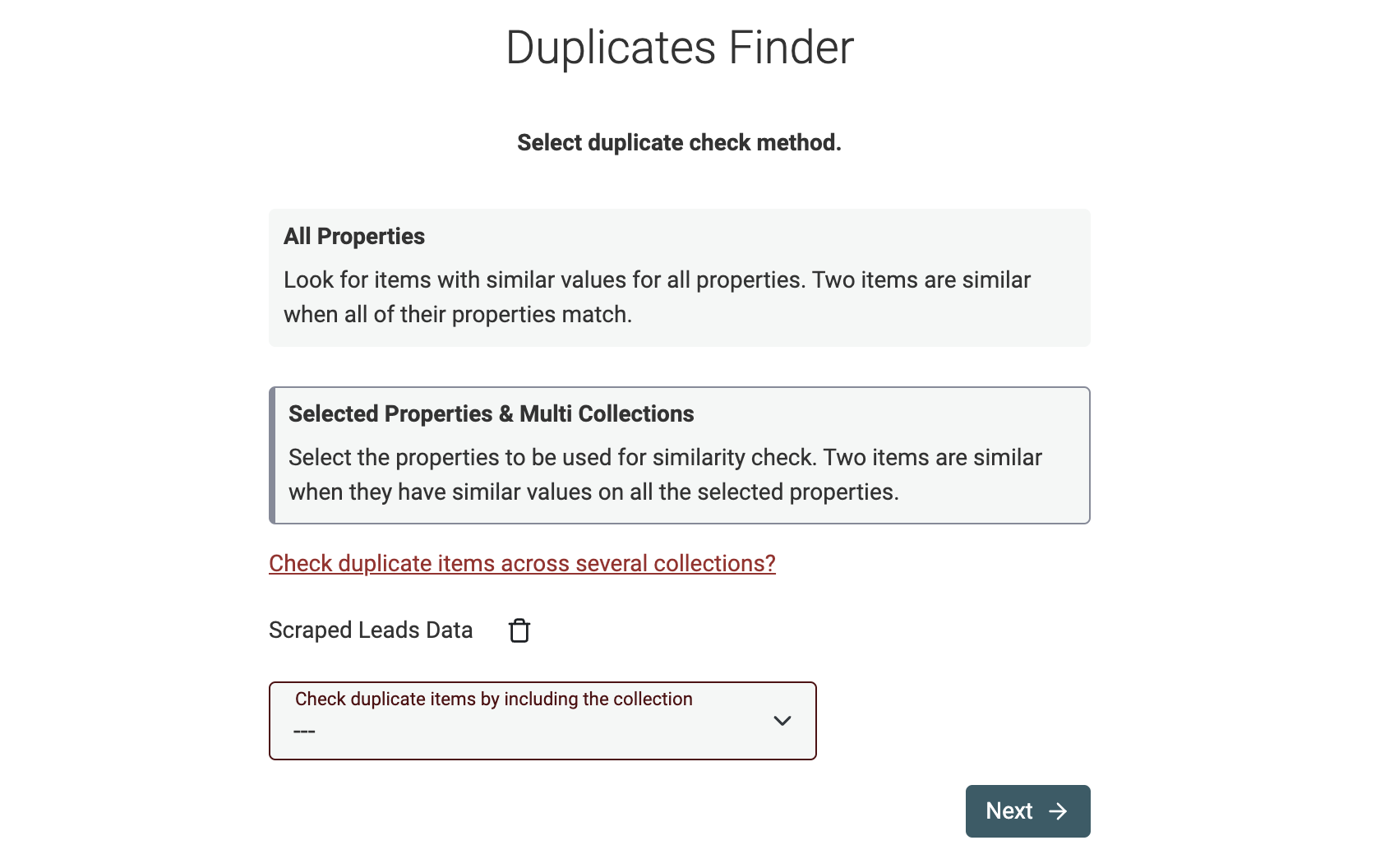

Datablist has two ways to compare records:

- Selected properties comparison - This is the most used mode. You define the properties to be compared. This mode is compatible with multi-collection matching.

- All Properties comparison - In this mode, Datablist Duplicates Finder identifies and removes records that are exactly the same. They must have the same data for the same properties. If a property has an empty value, records will not be matched.

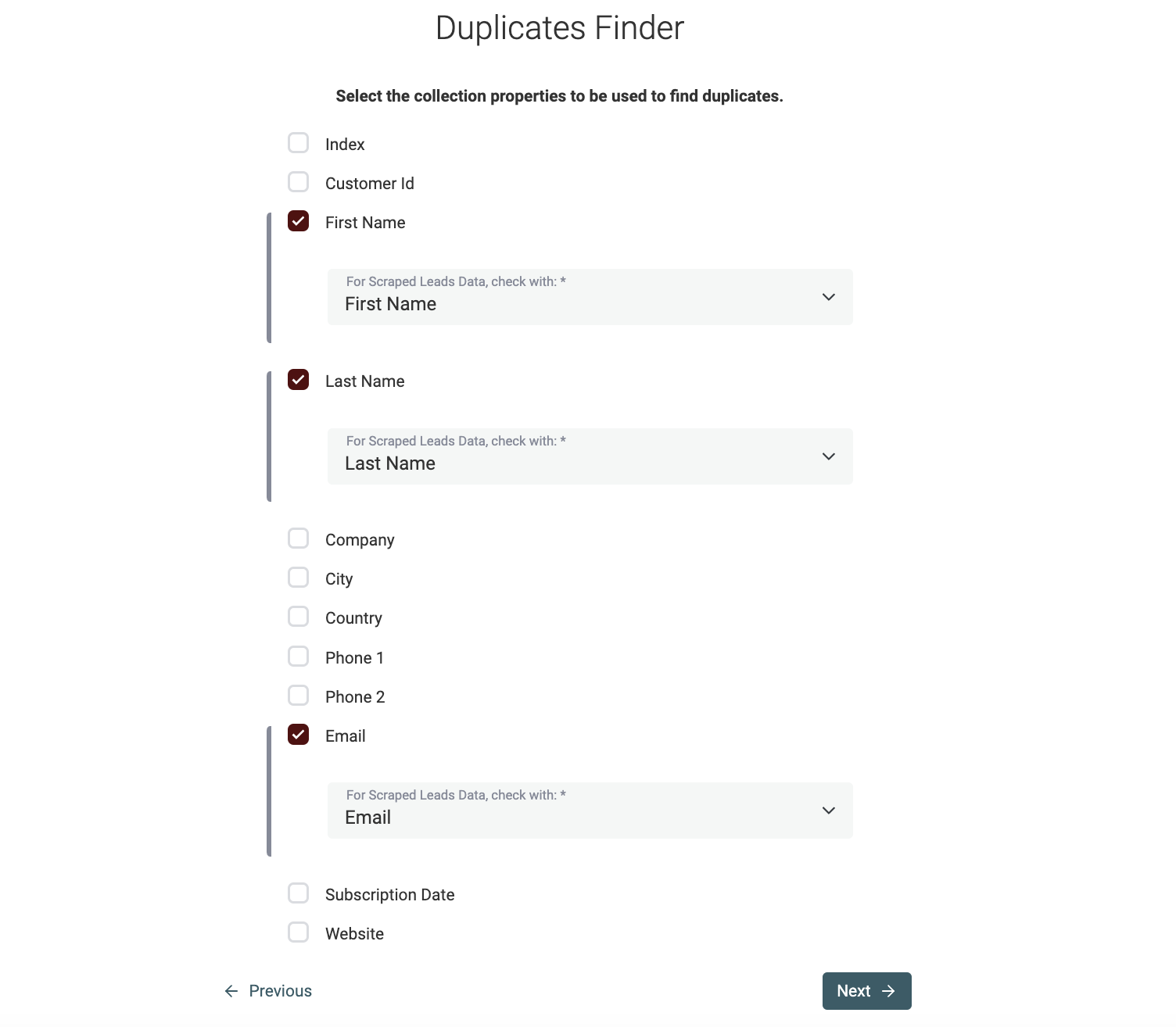

Select properties to match

For the rest of this guide, we'll be using the "Selected Properties & Multi Collections" mode.

The next step is to select the properties that will be used for data matching. If you have selected multiple collections in the previous step, you will be asked to select a mapping property for each collection.

Notes

Datablist will try to auto-map your properties across collections using their name.

Select matching algorithm

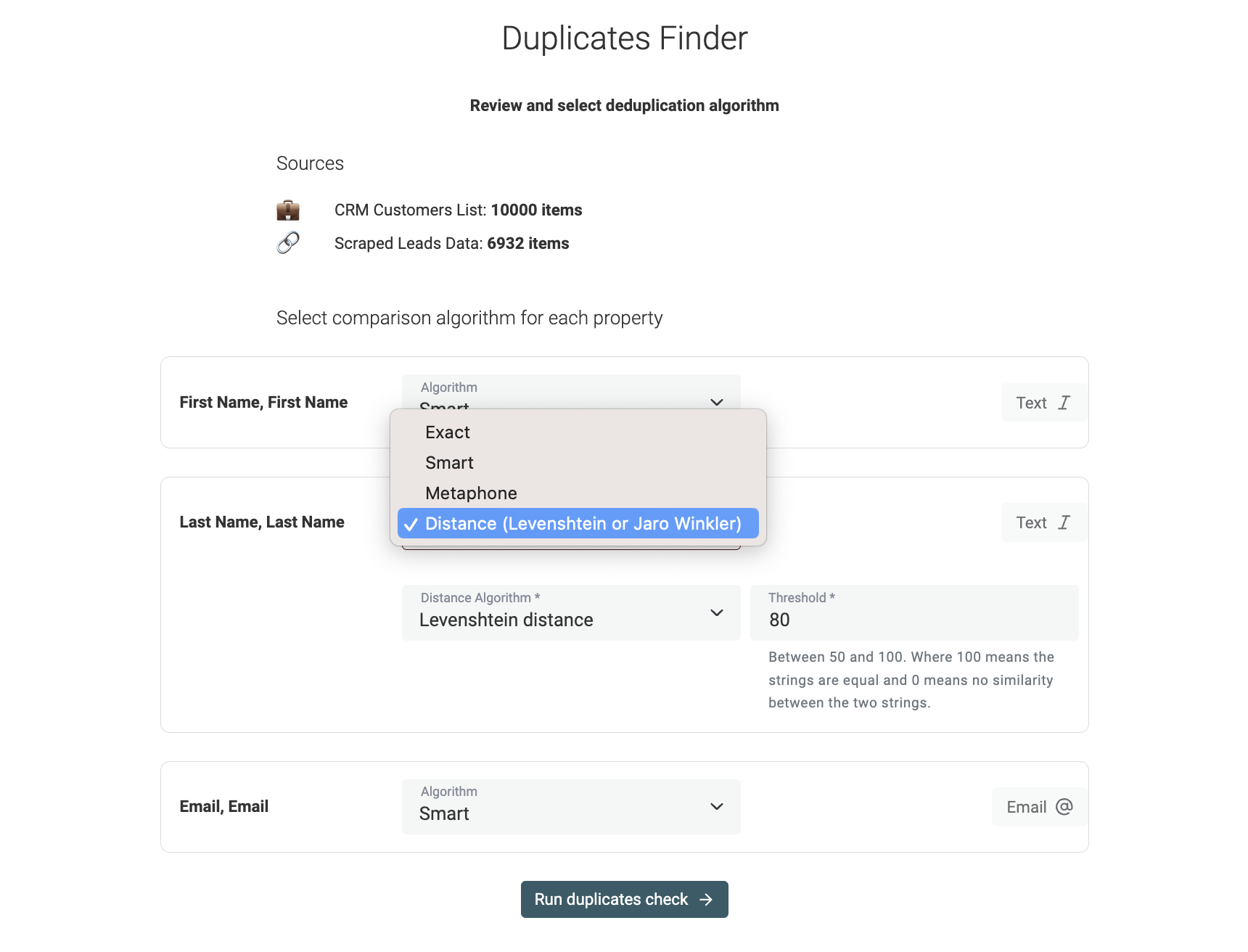

In the following step, selected properties are listed and you need to configure comparison algorithms.

Datablist implements the following matching algorithms:

-

Exact - The exact algorithm is the recommended algorithm for non-text properties such as DateTime, Number, Boolean, etc. When used for text properties, an option lets you decide if the comparison should be text-sensitive. The exact algorithm removes leading and trailing spaces from texts.

-

Smart - The smart algorithm preprocesses your items to match data with slight variations. It will match URLs with different protocols. It also deals with word order and punctuation. "John-Doe" and "Doe John" will match.

-

Phonetic with Double Metaphone algorithm - Datablist implements the Double Metaphone algorithm for phonetic matching. This algorithm converts words to codes representing their pronunciation. Two similar-sounding words will get the same Double Metaphone code.

-

Fuzzy matching with distance algorithms - Datablist also implements fuzzy matching with the Jaro-Winkler and Levenshtein distances. When selected, you must set a similarity threshold. The higher the threshold, the less variation will be allowed.

Check our documentation for more details on the matching algorithms.

Notes

- Smart, Phonetic, and fuzzy algorithms only apply to text-based properties (including Email, Text, LongText.).

- URL properties are only compatible with Exact and Smart algorithms.

Step 4: Remove or merge matching groups (deduplication)

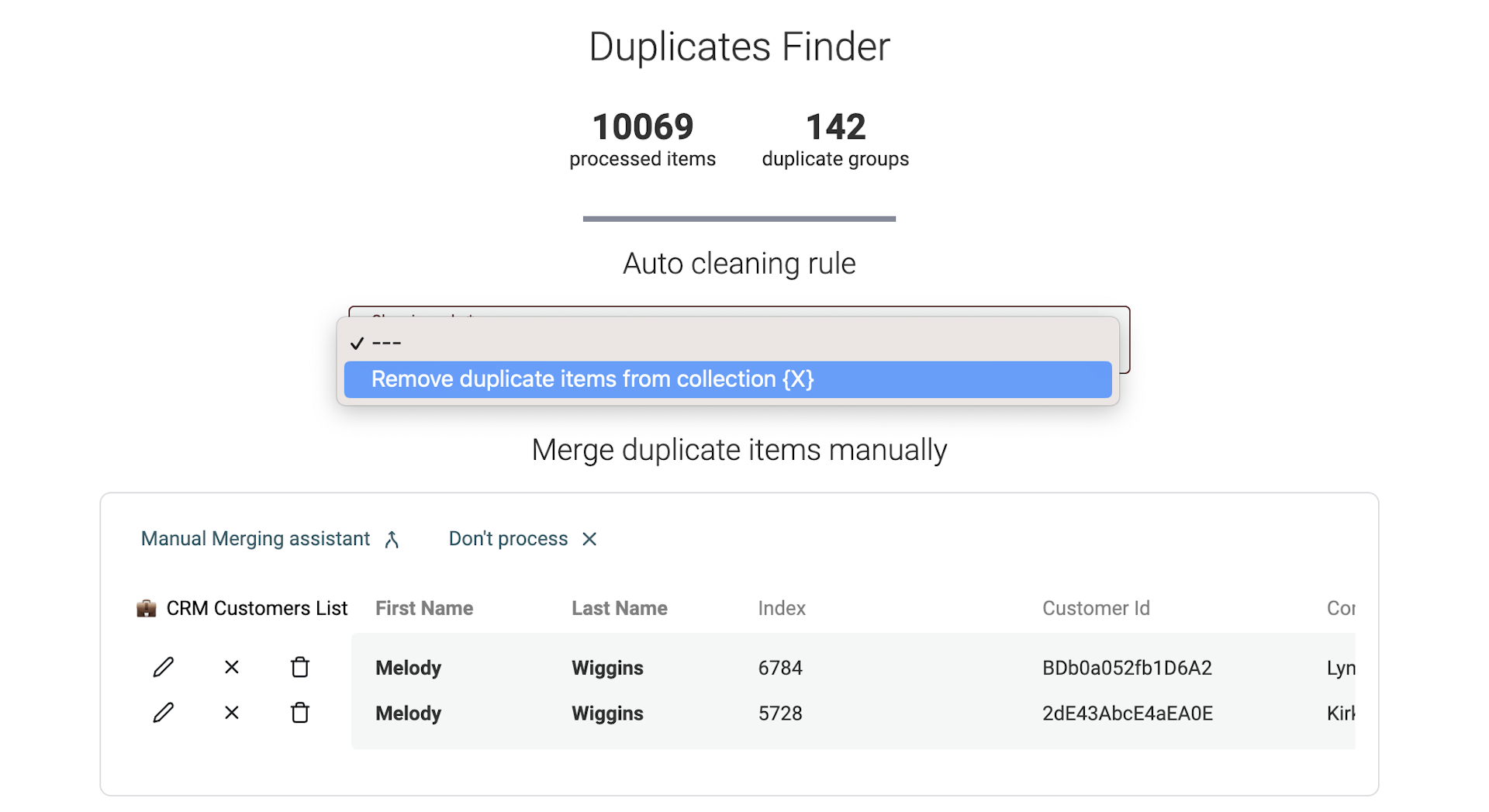

Datablist Duplicates Finder returns a list matching groups within a few seconds.

Automatic merging for single collection deduplication

Datablist Data Matching tool provides an advanced algorithm to merge your duplicates. It provides two modes for dupes merging:

- Non-conflicting items merging. (see below)

- Merge conflicting items using text concatenation or value dropping (see below)

Notes:

Please note that this feature is only available on single collection deduplication. With multi-collection deduplications, the data structure might be different between your collections.

Auto-Merging without data conflicts

Datablist will automatically find all duplicates that can be merged without losing any information.

It works as follows:

- If all the duplicate items have the same property values, only one item will be kept and the others will be deleted.

- If the duplicate items are complementary, the item with the most information will be selected as the primary item and its property values will be filled using other item property values. Then all items except the primary item will be deleted.

- If duplicate items have conflicted property values, items will be skipped for manual merging.

Auto-Merging with data conflict resolution

When running the Auto-Merging algorithm, Datablist Duplicates Finder automatically detects conflicting properties. A conflict is when two items have different values for a property. To be able to merge them, you must choose between two options:

- Combine properties values - The combine option lets you concatenate values using a delimiter. For example, if two different "Phone" values exist for a similar record, you can concatenate the two using a semi-colon. This option is perfect for email addresses, phone numbers, notes, etc.

- Drop properties values - For non-text properties, it's possible to keep only one value and drop the conflicting one. For example, if two Datetime values conflict, it's not possible to concatenate them. In this case, you must keep only one. This option is also useful to deal with external identifiers. When you are cleaning data for your CRM, the external account ID must identify a record on the CRM and it can't be a concatenated string.

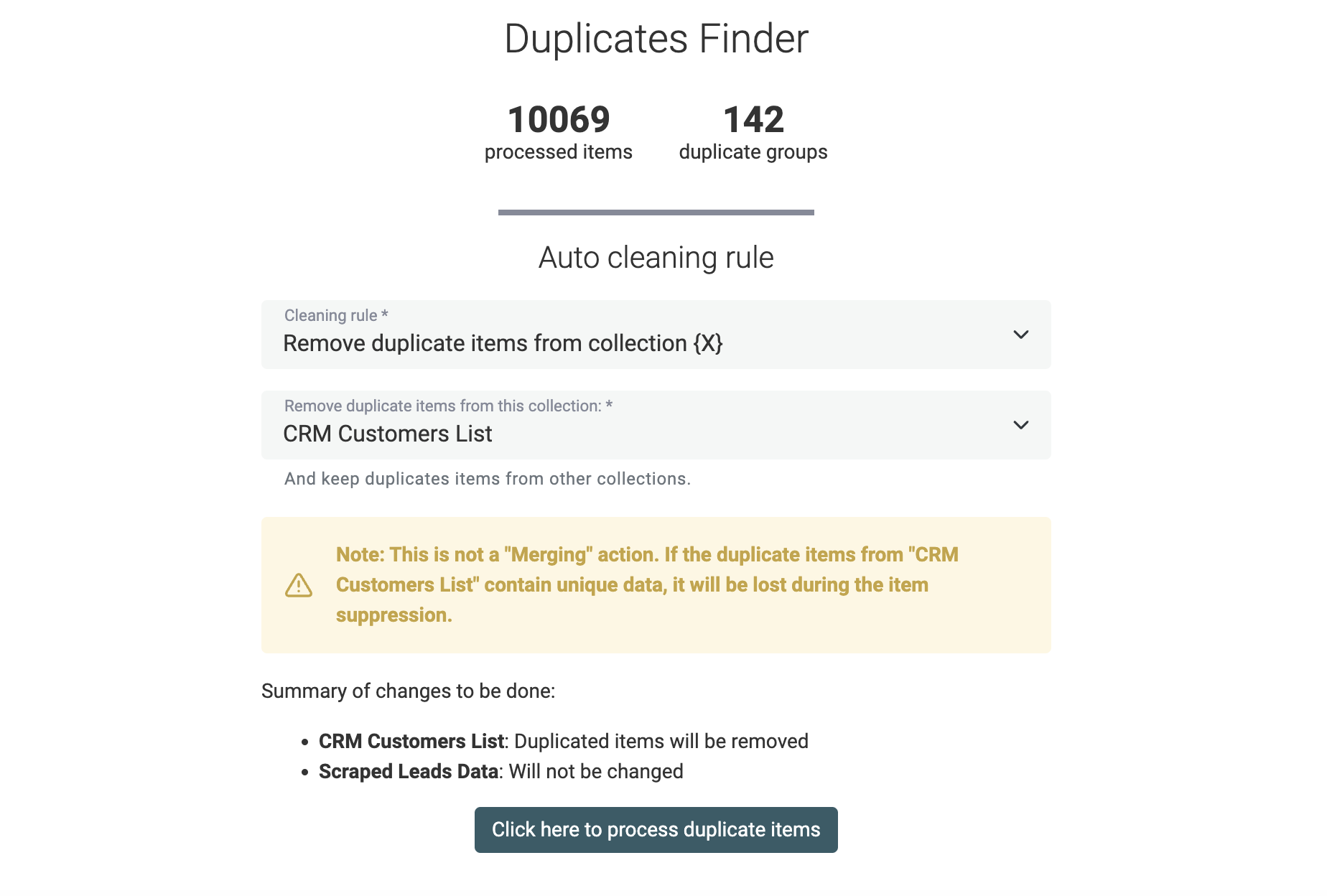

Cleaning rules for multi-collection data matching

When you run Datablist Data Matching tool on several datasets, the auto-merging feature won't be available. Your collections might have different data structures with different properties.

Instead, Datablist offers a cleaning feature to remove duplicate items in all except one collection. Use this tool to ensure item uniqueness across your datasets.

A preview of the changes to be done is displayed before running the cleaning algorithm.

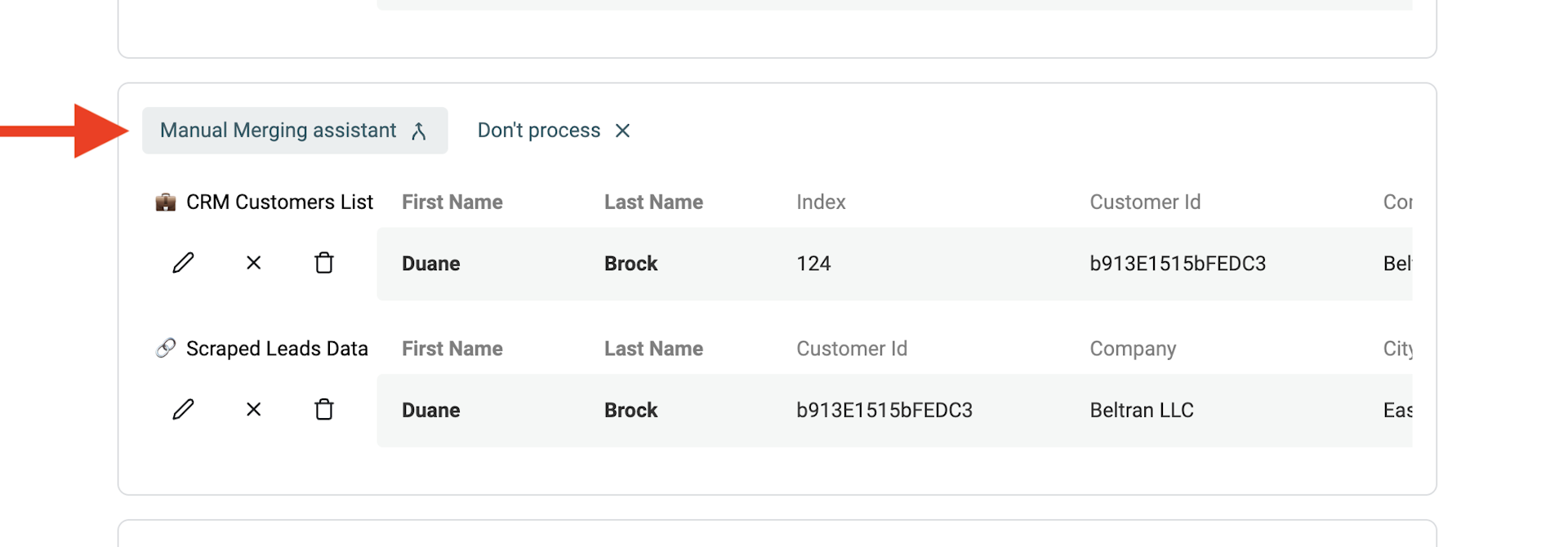

Manual merging with the Merging Assistant

For the remaining duplicate records, a manual merging assistant is available.

To merge duplicates, click on the "Manual Merging Assistant" button on the left of every duplicate group.

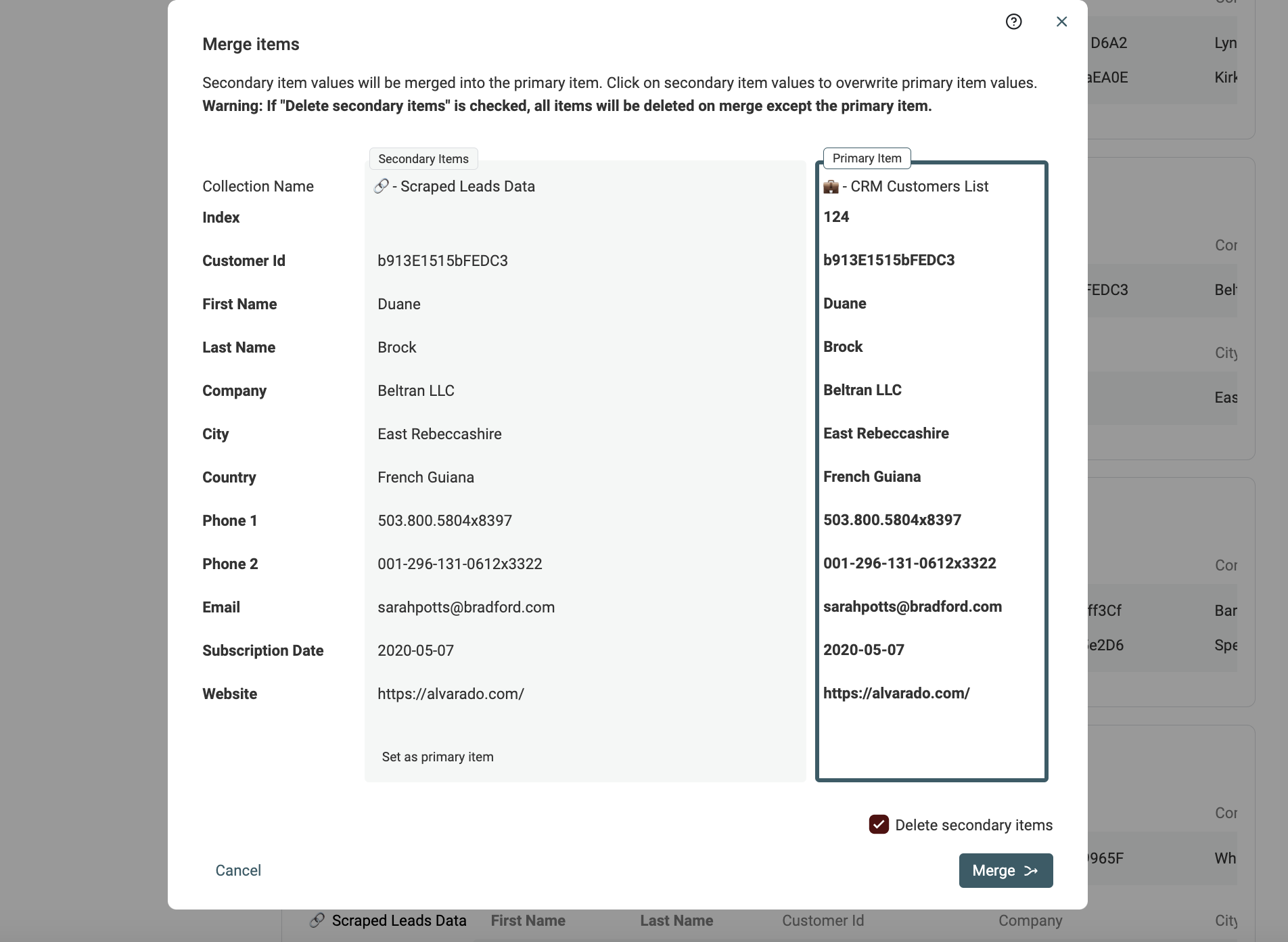

It opens a merging tool. On the right, a "Primary Item" is shown and on the left, the remaining duplicate items are called "Secondary Items". Datablist elects the item with the most data as the "Primary item".

When possible, property values from secondary items are auto-selected to be merged into the primary items. If several values conflict, you will have to make a decision and select which value to keep.

If the resulting "Primary item" suits you, click the Merge button to confirm the merge process. All the secondary items will be deleted to keep only one combined item.

Notes The manual Merging Assistant is available in multi-collection deduplication when the collection has a similar data structure (same properties).

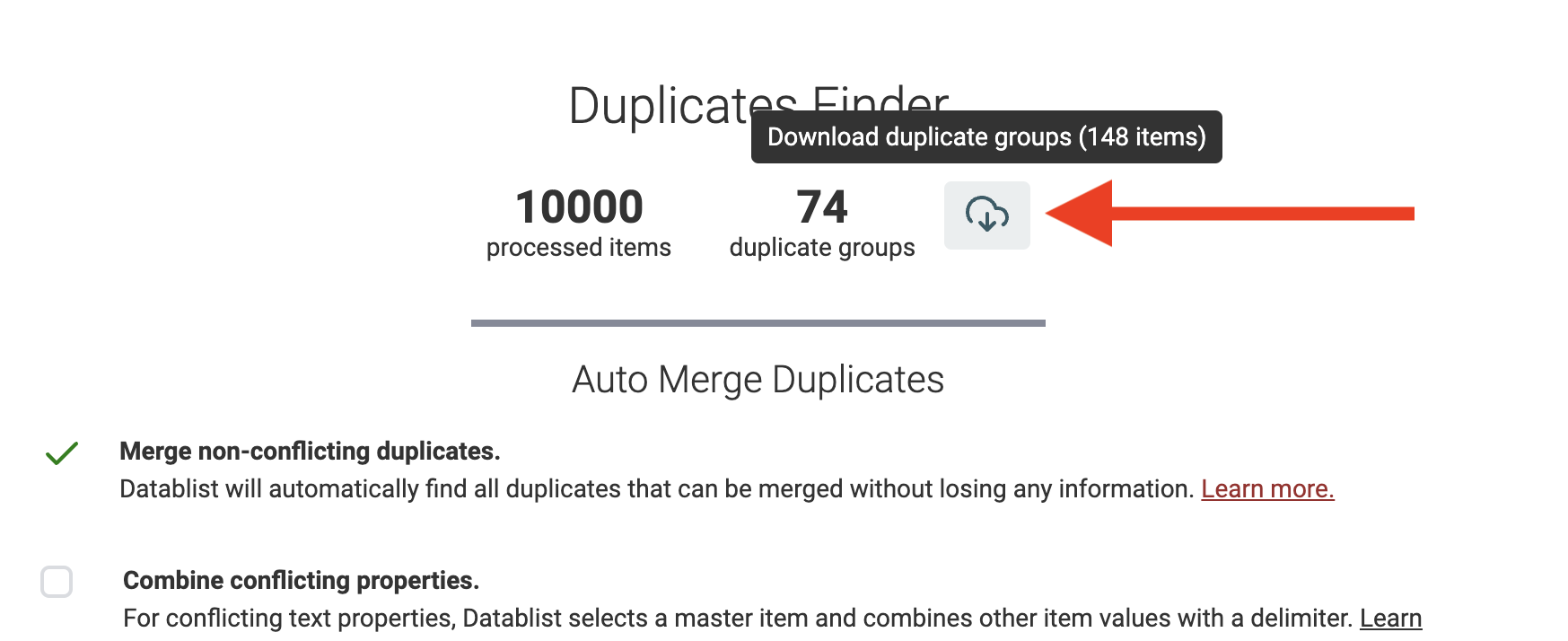

Download duplicate groups to merge with an external tool

Finally, Datablist Data Matching tool offers an export of the duplicate groups detected. It lets you export a CSV or Excel file with all duplicate items listed consecutively.

Use the export file to clean your records with another tool (for example a spreadsheet tool), or to run more complex analysis.

FAQ

What is data matching?

Data matching, also known as record linkage or deduplication, is the process of identifying and linking related records within or across datasets. Its primary objective is to enhance data quality, accuracy, and consistency by recognizing and consolidating duplicate or similar entries representing the same entities, individuals, or objects.

This process is helpful for cleaning datasets that get duplicate records added over time. Or to combine several datasets with similar or overlapping fields.

Data matching can use discriminative fields such as email address, website URL, or identification strings/numbers. Or a combination of non-unique attributes (such as name, date of birth, company name, or location) to generate a similarity score between records.

How fast is Datablist data-matching tool?

Datablist Data Matching tool loads your datasets in memory to perform the matching analysis. It is suited for datasets under 1 million records. And perform most data-matching analyses in less than a few minutes.

Do I need technical skills to perform Data Matching?

No. Datablist is a no-code solution made for everyone. From data analysts to marketing or salespeople.

When to use data matching?

Data matching finds extensive applications across diverse fields, including finance, healthcare, marketing, and customer management, where reliable data is essential for informed decision-making or data integration with other tools.

This process facilitates tasks such as fraud detection, user profile consolidation, and data enrichment from multiple sources.

What's next?

If you're interested in data cleansing, you'll like the following guides: