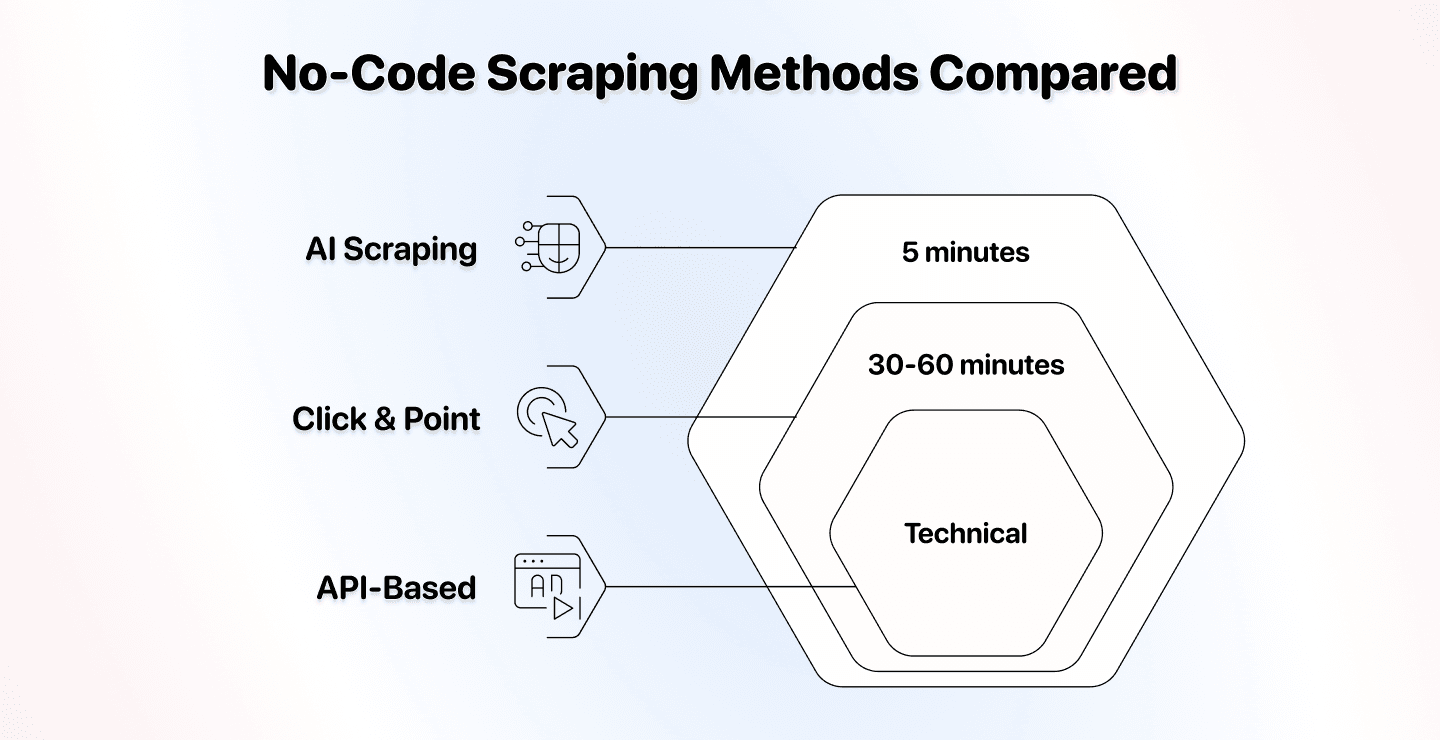

The no-code scraping world has three distinct methods, and picking the wrong one will cost you a lot of time and money.

We compared AI scraping, traditional no-code scraping, and API-based scraping to show you the real differences. No fluff, just the facts about setup time, flexibility, price, and best use cases.

By the end of this guide, you'll know exactly which method fits your needs.

📌 Summary For Those In a Rush

This article compares three no-code scraping methods to help you choose the right one for your specific needs.

The Question: Which no-code scraping method should you use for your projects?

What We Compared: AI scraping, traditional click-and-point tools, and API-based scrapers across setup difficulty, flexibility, price, use cases, and best tools.

The Quick Answer:

- AI scraping is easiest for non-technical users and adapts to website changes

- Click-and-point tools work best when you need precise control, and websites rarely change

- API-based scraping is most cost-effective but requires technical knowledge

What You'll Learn: How each method works, what makes them different, when to use each one, and which tools deliver the best results.

What This Article Covers

- AI Scraping: The Method That Understands Natural Language

- Traditional No-Code Scraping: Click-and-Point Tools

- API-Based Scraping: The Technical Middle Ground

- Our Bottom Line on Which Method to Choose

AI Scraping

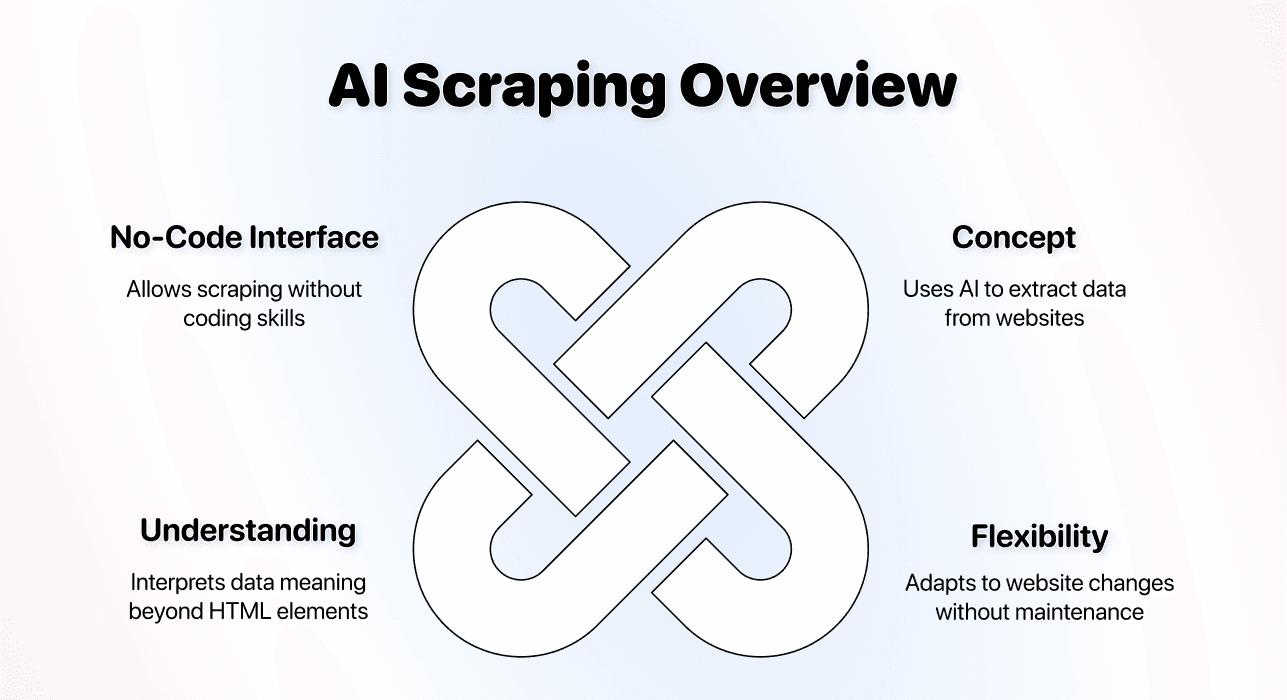

AI scraping represents the newest form of no-code data extraction. It uses artificial intelligence to understand what you want and figures out how to get it.

The Concept

AI scraping tools use large language models and machine learning to extract data from websites. You describe what you want in plain English, and the AI handles the technical details.

People call it different things (AI no-code scraping, AI data scraping, AI web scraping), but they all refer to the same concept: using AI tools to scrape websites without writing code or configuring technical selectors.

Here's what makes it different:

↳ Traditional scrapers follow rigid rules you create

↳↳ AI scrapers understand context & adapt to changes

↳↳↳ This means less maintenance and way more flexibility

The AI doesn't just look for specific HTML elements. It understands that "product price" means finding the cost of an item, regardless of how the website structures that information.

Setup Difficulty

AI scraping has the easiest setup of all three methods.

The typical workflow:

- Select your AI scraping tool

- Enter the website URL

- Describe what data you want in natural language

- Run the scraper

Time investment: 5 minutes for most websites[1]. You don't need to understand HTML, CSS selectors, or website architecture. The AI figures out where to find the data based on your description.

Here’s a video showing me using an AI scraping agent to scrape an e-commerce website in 6.04 minutes 📺

The main skill you need: Clear communication. If you can describe what you want, you can set up AI scraping in a few minutes.

A Few AI Scraping Templates You Might Like

We often create scraping templates for our users ❤️. Here are a few you might like:

- How to scrape the YC Startup directory

- How to scrape properties from Zillow

- How to scrape real estate agents from Zillow

- How to scrape properties from AirBnB

- How to scrape businesses from Yellow Pages

- How to scrape an e-commerce shop

- How to scrape case studies from a website

These templates are also available in the Datablist app and take literally just a few clicks to start using. If you want us to create a template for you, reach out here 👈🏽

Flexibility

This is where AI scraping shines compared to other methods.

AI scraping adapts automatically when:

↳ Websites redesign their layout

↳↳ Content appears in unexpected locations

↳↳↳ Different pages use different HTML structures

Traditional scrapers, whether no-code or coded, break when websites change because they look for specific HTML elements. AI scrapers work from meaning, so they keep working when the technical structure changes. Once you set up an AI scraper, it tends to keep running without reconfiguration.

Example scenario: You're scraping product information from multiple e-commerce sites. Each site structures its HTML differently. With AI scraping, you use the same prompt for all of them, and the AI scraping agent adapts to each site's unique structure.

The limitation: AI scraping works best for publicly visible data. It doesn't handle complex authentication flows or pages behind login walls as well as a custom-built scraper can.

Price

AI scraping typically costs more per operation than other methods because it uses computational resources to understand and process pages.

Typical pricing models:

- Subscription plans with included credits

Cost factors:

- JavaScript-heavy sites cost more (they require rendering)

- Pagination and multi-step tasks increase costs

- Simple directory pages cost less

Real-world example: Scraping 1,000 business listings from a directory might cost 500-1,000 credits in most AI scraping tools. The exact cost depends on page complexity and how much data you extract.

Is it worth the price? For non-technical users, absolutely. You're paying for time savings, zero maintenance, and, most importantly, peace of mind. You could also call AI scraping “headache-free scraping.”

Use Cases and Best Practices

AI scraping works best in specific scenarios where its strengths matter most.

Best use cases:

- Scraping multiple websites with different structures

- Extracting data when you're not technical

- Projects where maintenance time is expensive

- Situations where websites update frequently

- Gathering diverse data types that require context understanding

- Situations where you don't want to set up a traditional no-code scraper or an API integration

When AI scraping is the smart choice: You need to scrape competitor websites for market research, but each competitor uses different website builders and layouts. AI scraping handles all of them with the same prompt.

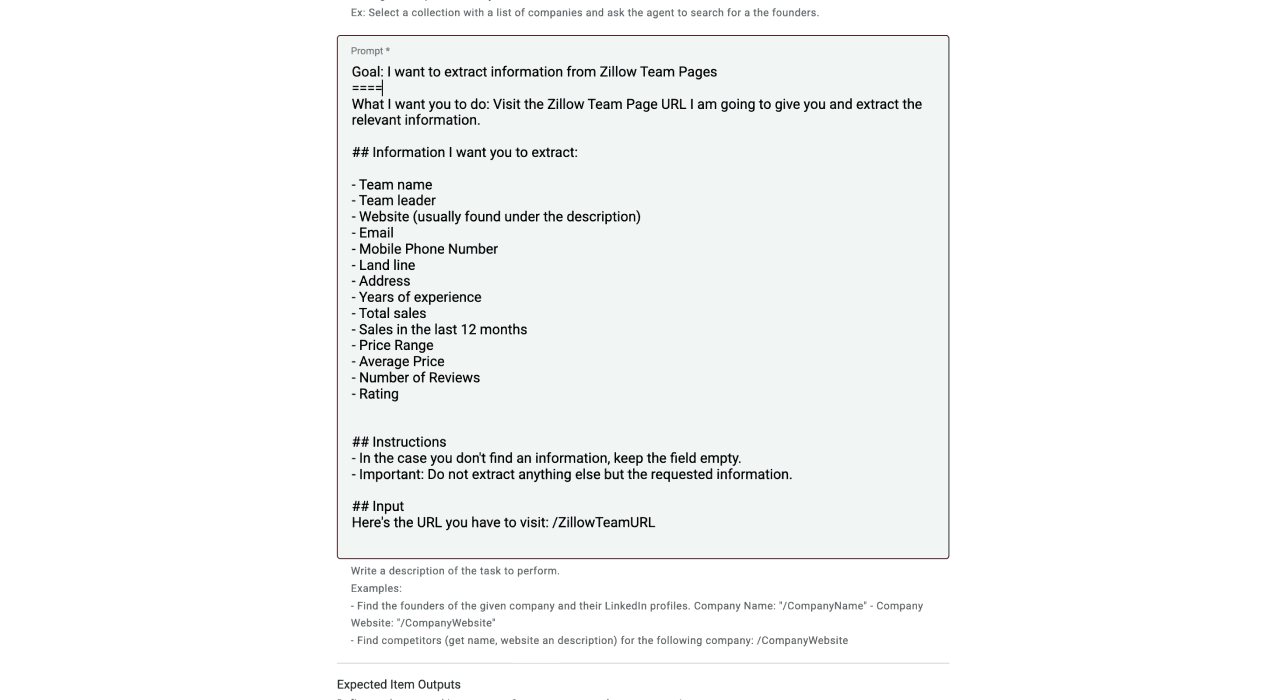

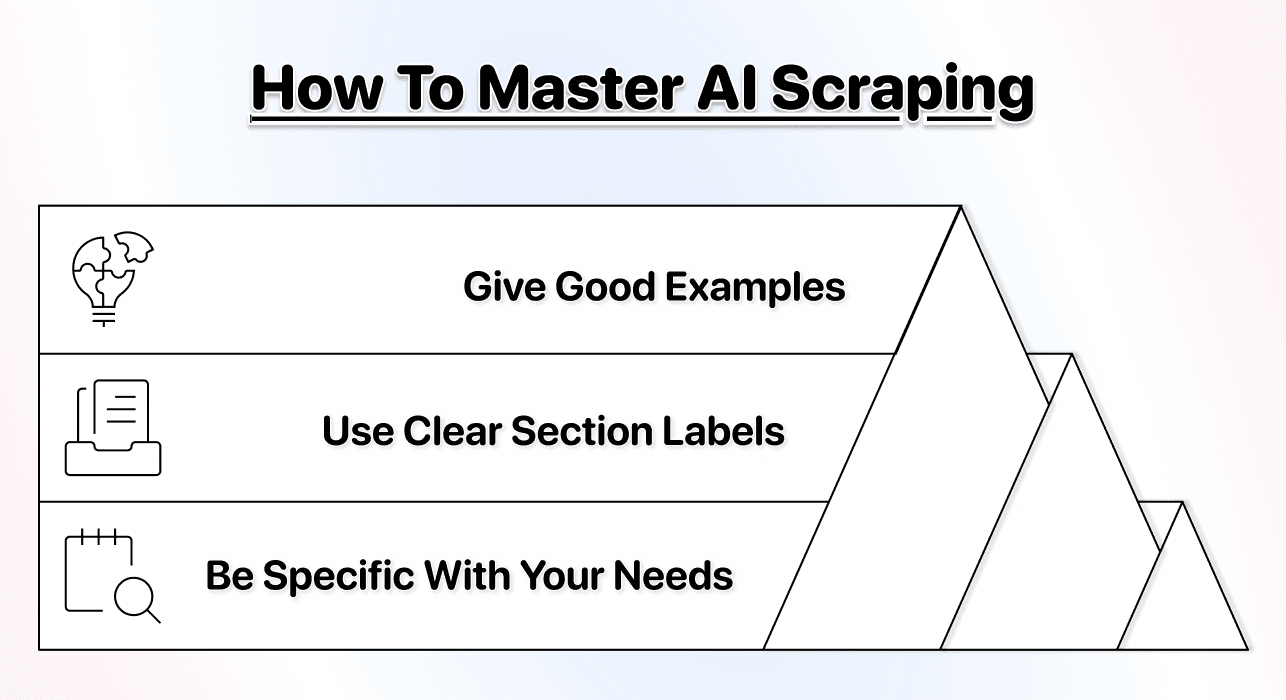

Best practices for AI scraping:

- Write clear, specific prompts about what data you need

- Provide examples when possible to improve accuracy[2]

- Start with small tests before scaling to large datasets

- Use section labels in your prompts for better results

Here’s a helpful guide in case you want to know more about how to prompt an AI agent 👈🏽
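As a rough illustration of the prompt practices above (specific wording, an example, and section labels), here is a minimal Python sketch that assembles a labeled prompt. The label names (`GOAL`, `FIELDS TO EXTRACT`, `EXAMPLE ROW`) and the helper `build_scraping_prompt` are assumptions made for this example, not a format any particular tool requires.

```python
def build_scraping_prompt(goal: str, fields: list[str], example: str = "") -> str:
    """Assemble a prompt with labeled sections (label names are illustrative)."""
    lines = ["GOAL:", goal.strip(), "", "FIELDS TO EXTRACT:"]
    lines += [f"- {field}" for field in fields]
    if example:
        # An example row shows the AI what a good result looks like.
        lines += ["", "EXAMPLE ROW:", example.strip()]
    return "\n".join(lines)


prompt = build_scraping_prompt(
    goal="Extract every business listed on this directory page.",
    fields=["Business name", "Phone number", "Website URL"],
    example="Acme Plumbing | 555-0100 | https://acme.example",
)
print(prompt)
```

You would paste the printed text into your AI scraping tool as the instruction. Keeping the sections separate makes it easy to change the field list without rewriting the whole prompt.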

Best Tool to Use

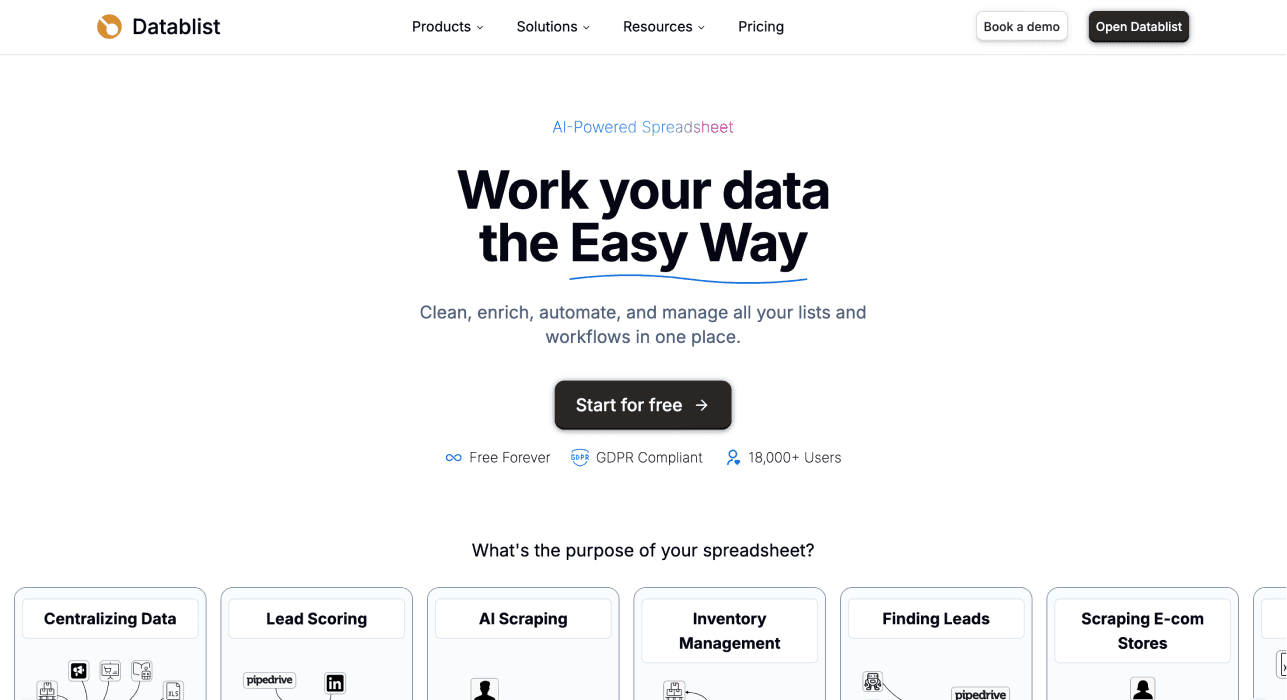

For AI scraping, Datablist stands out as the best option for non-technical users[3].

Why Datablist works:

- True natural language prompting[4] (no technical knowledge required)

- Multiple specialized AI agents for different scraping tasks[5]

- Built-in ecosystem with 60+ lead generation tools

- Handles JavaScript rendering and pagination automatically[6]

- Affordable pricing starting at $25/month

- Has built-in bulk enrichment capabilities

What makes it different: Datablist isn't just an AI scraper. It's a complete lead generation platform that includes AI scraping alongside an email finder, a Sales Navigator scraper, and data cleaning tools. You can scrape a list and immediately enrich it with contact information without switching tools.

Datablist’s main advantage: You're getting AI scraping plus a complete workflow automation platform built to support data enrichment, lead list building, or any other lead generation workflow for less than most standalone scraping tools charge.

📘 AI Scraping is Technically No-Code Scraping Too

AI scraping is technically a subcategory of no-code scraping since it requires no coding skills. It's the easiest no-code method because it only requires natural language instructions rather than understanding website structures.

Traditional No-Code Scraping (Click-and-Point Tools)

Click-and-point scrapers were the original "no-code" solution. They let you visually select data on a webpage instead of writing code. In this section, we also refer to them as “traditional no-code scrapers.”

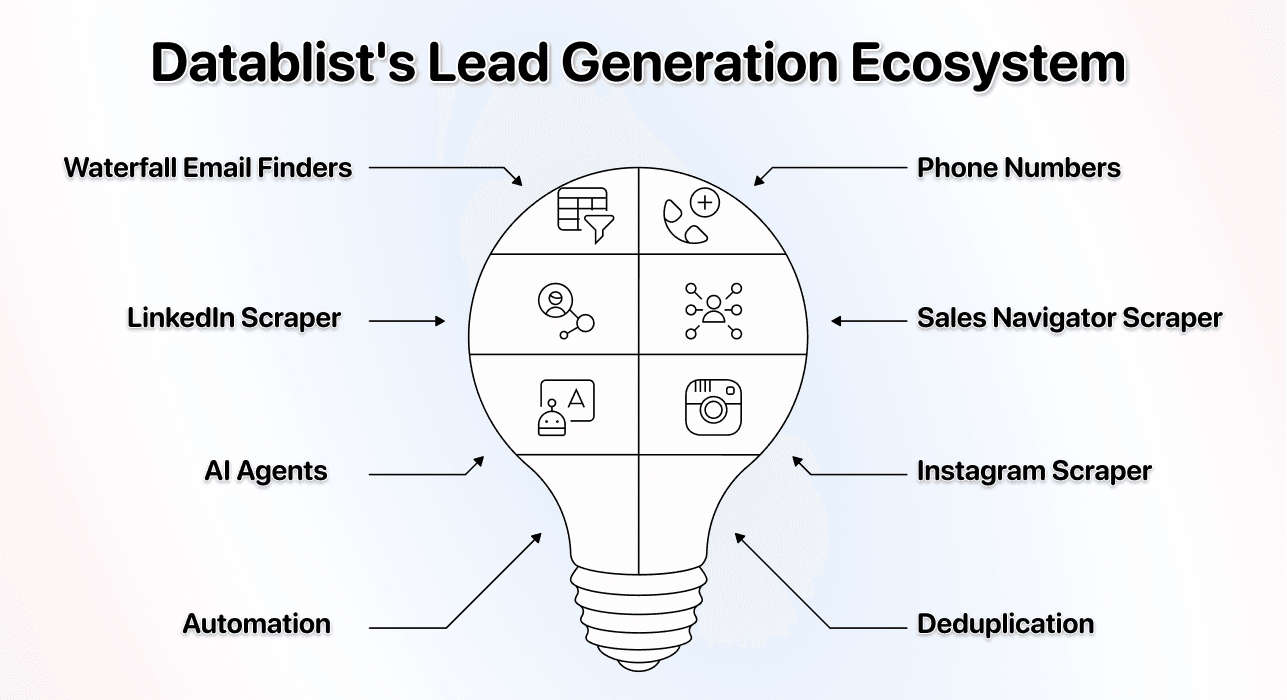

The Concept of Traditional No-Code Scrapers

Traditional no-code scraping uses visual interfaces where you click on webpage elements to tell the tool what to extract.

The basic workflow: You open the website in the tool, click on the product name, then the price, then the description. The tool records your clicks and creates a scraper based on those selections.

What's happening behind the scenes: The tool converts your clicks into CSS selectors or XPath expressions. You're not writing code, but you're still creating rigid technical rules that depend on the website's HTML structure staying the same.
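To make the "rigid technical rules" concrete, here is a small Python sketch of what a recorded selection might look like and how it fails after a redesign. The XPath rule and the HTML snippets are made up for illustration, and the snippets are deliberately well-formed so the standard library's XML parser can read them (real pages usually need a proper HTML parser).

```python
import xml.etree.ElementTree as ET

# A rule similar to what a click-and-point tool might record (hypothetical).
PRICE_RULE = ".//span[@class='price']"


def extract_price(html: str):
    """Return the text matched by PRICE_RULE, or None if nothing matches."""
    root = ET.fromstring(html)
    node = root.find(PRICE_RULE)
    return node.text if node is not None else None


before = "<div><h2>Desk lamp</h2><span class='price'>$19.99</span></div>"
after = "<div><h2>Desk lamp</h2><span class='product-price'>$19.99</span></div>"

print(extract_price(before))  # the rule matches the original markup
print(extract_price(after))   # the class was renamed, so the rule finds nothing
```

The price is still visible on the page in both versions. Only the class name changed, and that is enough for the recorded rule to stop matching.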

Why it's called "no-code": You don't write Python or JavaScript. But you do need to understand how websites organize information and sometimes troubleshoot when elements don't get selected correctly.

Setup Difficulty

Click-and-point tools have a moderate learning curve that surprises most beginners.

The setup process:

- Download and install the tool (many are desktop apps)

- Open your target website in the tool

- Click on each data point you want to extract

- Configure pagination rules if needed

- Test to make sure the right data gets scraped

- Debug when wrong elements get selected

- Save and run your scraper

Time investment: 30-60 minutes for a moderately complex website.

❗️ Be Aware of This

There's a hidden complexity that comes with click-and-point tools: Elements don't always get selected correctly. Sometimes, clicking a phone number selects the entire contact section, and you can't fix that since the root cause is in the website itself, not the scraper. If you ever encounter this issue, you'll have to clean the data after scraping.

Common beginner frustrations with traditional no-code scrapers:

- Clicking one element selects something completely different

- Chatting with support teams becomes a routine

- Pagination doesn't work as expected

- Data appears mixed together instead of in separate fields

- Scrapers break after website updates

Who finds it easy: People comfortable with technology and willing to watch tutorials can master click-and-point tools. Expect to spend a few hours learning before becoming productive.

Flexibility

Click-and-point tools are rigid by design. They extract exactly what you configured, exactly how you configured it.

What happens when websites change:

↳ Layout redesigns break your scraper completely

↳↳ Minor CSS updates can stop data extraction

↳↳↳ You rebuild the scraper from scratch

The maintenance burden: Websites update constantly. Popular e-commerce sites might update quarterly. Each update means reconfiguring your scraper, which takes the same time as the initial setup.

Handling multiple websites: If you're scraping five competitor websites, you need five different scraper configurations. Each one breaks independently when that site updates.

The advantage click-and-point tools have: When websites don't change often (like government databases or stable directories), click-and-point tools provide reliable, consistent extraction once properly configured.

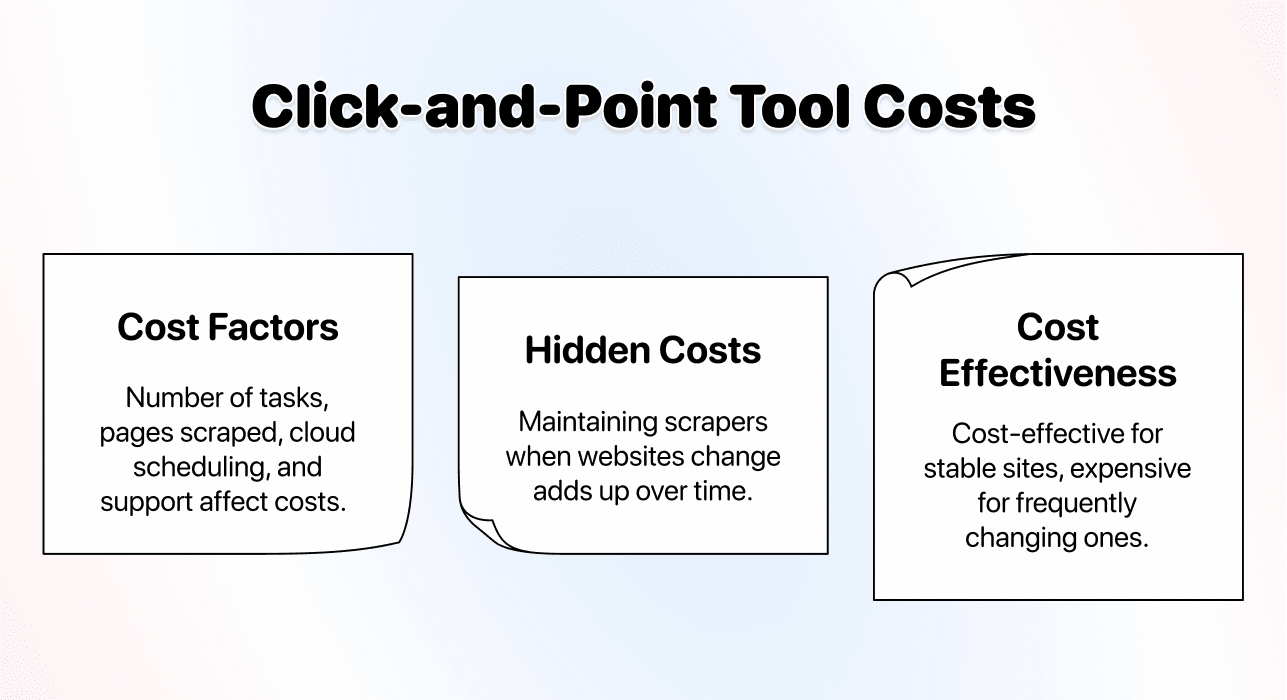

Price

Click-and-point tools typically use subscription pricing with tiered plans.

Common pricing structures:

- Entry plans: $50-100/month

- Professional plans: $150-300/month

- Enterprise plans: $500+/month

What affects your costs:

- Number of scraping tasks you can create

- How many pages you can scrape per month

- Access to cloud-based scheduling

- Priority support and advanced features

Hidden costs to consider: The time you spend maintaining scrapers when websites change adds up. If you're spending 5 hours per month fixing broken scrapers, that's a real cost, even if the subscription seems affordable.
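A quick way to see the hidden cost is to add maintenance time to the subscription price. The sketch below uses the low end of the entry-plan range and the 5 hours per month mentioned above; the $40 hourly rate is purely an assumption, so replace it with your own.

```python
def monthly_total_cost(subscription: float, maintenance_hours: float, hourly_rate: float) -> float:
    """Subscription price plus the value of the time spent fixing scrapers."""
    return subscription + maintenance_hours * hourly_rate


total = monthly_total_cost(
    subscription=50,      # low end of the entry-plan range
    maintenance_hours=5,  # hours per month fixing broken scrapers
    hourly_rate=40,       # assumed value of one hour of your time
)
print(f"Real monthly cost: ${total}")
```

Under these assumptions, the maintenance time costs four times as much as the subscription itself.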

Cost-effectiveness: For scraping websites that rarely change, click-and-point tools can be cost-effective once set up. For frequently changing sites, the maintenance time makes them expensive. In that case, consider using an AI scraping agent instead.

Use Cases and Best Practices

Click-and-point tools excel in specific situations where their limitations don't matter.

Best use cases:

- Scraping stable websites that rarely update

- Projects where you need precise control over data extraction

- Situations where you're scraping the same site repeatedly

- Desktop-based workflows where cloud tools aren't necessary

When click-and-point is the right choice: You need to scrape a government database that updates daily but never changes its structure. Once configured correctly, a click-and-point tool will reliably extract new data every day, assuming the tool is capable of extracting the right data when you select it.

Best practices:

- Document your scraper configurations for when they break

- Set up monitoring to catch when scrapers stop working

- Budget time for monthly maintenance

- Test thoroughly before scaling to large datasets

When to avoid click-and-point: If you're scraping multiple modern websites that update frequently (e-commerce websites, for example), the maintenance burden becomes overwhelming. Each site update requires manual intervention.

Best Tool to Use

Octoparse is the most established click-and-point scraping tool on the market.

By the way, here’s a recent article in which I compared the best no-code scrapers based on ease of use, integrations, and pricing 👈🏽

Why Octoparse:

- Mature interface with years of development

- Extensive tutorial library for common scenarios

- Desktop application with powerful features

- Good documentation and community support

The trade-offs: Octoparse requires time investment to learn. The interface is powerful but complex. Pricing starts at $83/month, making it expensive for individuals and small teams.

Who should use it: Teams comfortable with technology who need to scrape stable websites regularly and can justify the learning curve and subscription cost.

💡 Traditional Scrapers Have Their Place

Click-and-point tools aren't bad; they just have their best days behind them now that easier methods like AI scraping exist. For old, never-changing websites like government directories, they still work well.

API-Based Scraping

API-based scraping occupies the middle ground between code and no-code. It's technically no-code (you're not writing scraping logic), but it requires technical knowledge to use.

The Concept

API-based scrapers provide pre-built endpoints that handle scraping for specific websites or use cases.

How it works: You make an API call with parameters (like the URL to scrape and what data you want), and the service returns structured data. The scraping logic is already written; you're just configuring it through API parameters.

Technically, it's no-code, but you need to understand how to make API calls, handle authentication tokens, parse JSON responses, and integrate the results into your workflow. This requires programming knowledge or comfort with tools like Postman.
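To give a feel for what "making an API call" involves, here is a minimal Python sketch using only the standard library. The endpoint `https://api.scraper.example/v1/scrape`, the `url` and `fields` parameters, the bearer-token header, and the response shape are all hypothetical; every real provider defines its own in its documentation.

```python
import json
import urllib.parse
import urllib.request

# Hypothetical endpoint and key, for illustration only.
API_URL = "https://api.scraper.example/v1/scrape"
API_KEY = "YOUR_API_KEY"


def build_request(target_url: str, fields: list[str]) -> urllib.request.Request:
    """Prepare an authenticated GET request (parameter names are assumed)."""
    query = urllib.parse.urlencode({"url": target_url, "fields": ",".join(fields)})
    return urllib.request.Request(
        f"{API_URL}?{query}",
        headers={"Authorization": f"Bearer {API_KEY}", "Accept": "application/json"},
    )


def parse_response(body: str) -> list[dict]:
    """Pull the rows out of a JSON body shaped like {"results": [...]} (assumed)."""
    return json.loads(body).get("results", [])


request = build_request("https://shop.example/products", ["name", "price"])
print(request.full_url)
# Sending it would be: urllib.request.urlopen(request).read().decode()

# Sample body in the assumed shape, so the parsing step runs without a network call.
sample_body = '{"status": "ok", "results": [{"name": "Desk lamp", "price": "$19.99"}]}'
for row in parse_response(sample_body):
    print(row["name"], row["price"])
```

Even in this small sketch you deal with URL encoding, an authentication header, and JSON parsing, which is why this method sits closer to low-code than to true no-code.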

Common API scraping approaches:

- Website-specific APIs (like LinkedIn scraper APIs)

- General scraping APIs that work on any URL

- Template-based APIs with pre-configured scrapers for popular sites

The naming confusion: Some call it "no-code" because you're not writing scraping logic. Others call it "low-code" because you need technical skills. The reality is somewhere in between.

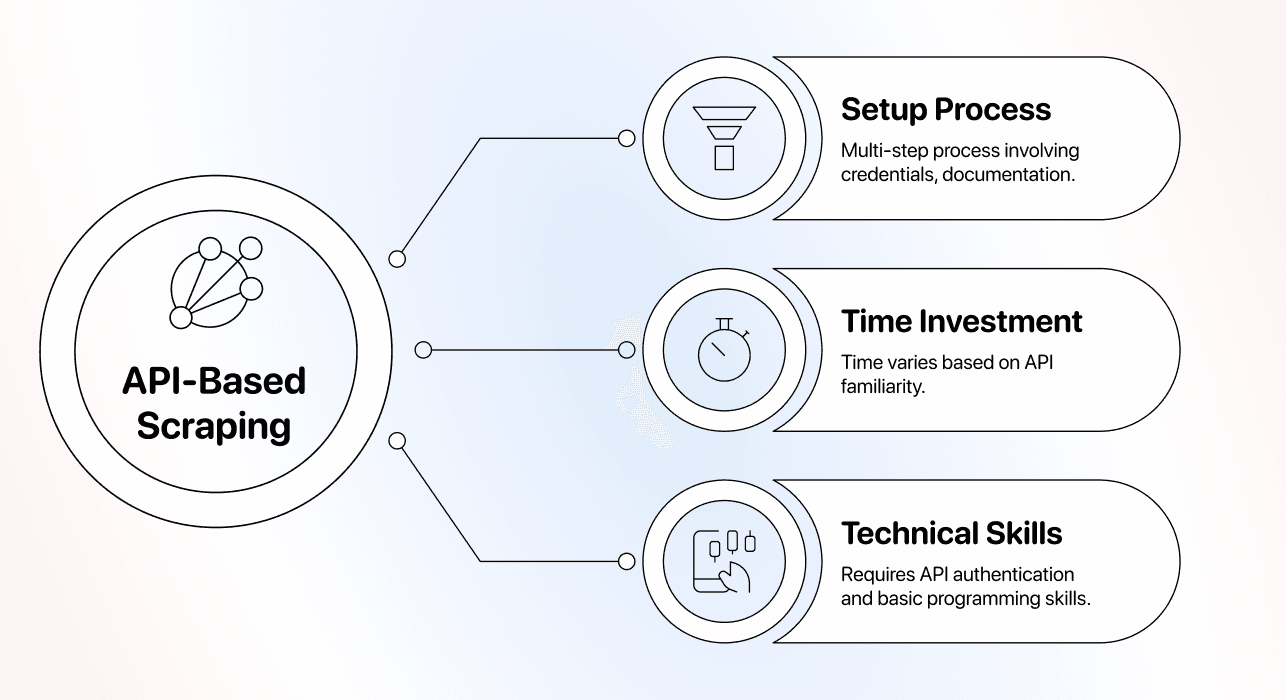

Setup Difficulty

API-based scraping requires technical knowledge that puts it beyond true "no-code" status.

The setup process:

- Sign up and get API credentials

- Read the documentation to understand the parameters

- Test API calls using a tool like Postman or curl

- Handle authentication and rate limiting

- Parse the JSON or XML response

- Integrate results into your application or workflow

- Implement error handling for failed requests

Time investment: 1-2 hours if you're comfortable with APIs, much longer if you're learning.

Technical skills required:

- Understanding REST APIs and HTTP requests

- Working with JSON data structures

- Handling authentication tokens and headers

- Basic programming to integrate results into your workflow
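Handling rate limits and failed requests, two of the setup steps listed above, usually means retrying with a growing pause between attempts. The following Python sketch shows one common pattern, exponential backoff. The `ScrapeError` exception and the `flaky_fetch` stand-in are hypothetical and replace a real API client so the example runs on its own.

```python
import time


class ScrapeError(Exception):
    """Raised by the (hypothetical) fetch function when a request fails."""


def fetch_with_retries(fetch, url, max_attempts=3, base_delay=1.0, sleep=time.sleep):
    """Call fetch(url), waiting 1s, 2s, 4s, ... between failed attempts."""
    for attempt in range(1, max_attempts + 1):
        try:
            return fetch(url)
        except ScrapeError:
            if attempt == max_attempts:
                raise  # give up after the last attempt
            sleep(base_delay * 2 ** (attempt - 1))


# Stand-in for a real API client: fails twice, then succeeds.
calls = {"count": 0}


def flaky_fetch(url):
    calls["count"] += 1
    if calls["count"] < 3:
        raise ScrapeError("rate limited")
    return {"url": url, "status": "ok"}


delays = []  # record the pauses instead of actually sleeping
result = fetch_with_retries(flaky_fetch, "https://shop.example/products", sleep=delays.append)
print(result["status"], calls["count"], delays)
```

Passing `sleep` as a parameter keeps the example fast and testable. In real use you would leave the default so the script actually waits.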

Flexibility

API-based scrapers offer moderate flexibility that depends entirely on the provider.

What you can control: Most API-based scrapers let you specify which data points to extract, set rate limits, choose output formats, and configure some behavior through parameters.

What you can't control: The underlying scraping logic is a black box. If the API doesn't support a specific website or data type, you're stuck. You can't modify how it works.

Website changes: Good API providers maintain their scrapers and adapt to website changes automatically. Bad providers might not update for weeks, leaving you with a broken scraper.

The dependency risk: You're completely dependent on the API provider. If they shut down, change pricing, or stop maintaining specific scrapers, your workflow breaks, and you have no recourse.

When flexibility matters most: If you need to scrape websites the API doesn't support or extract data in ways the API doesn't allow, you're out of options. In this case, a custom scraper or AI scraping might be the better choice.

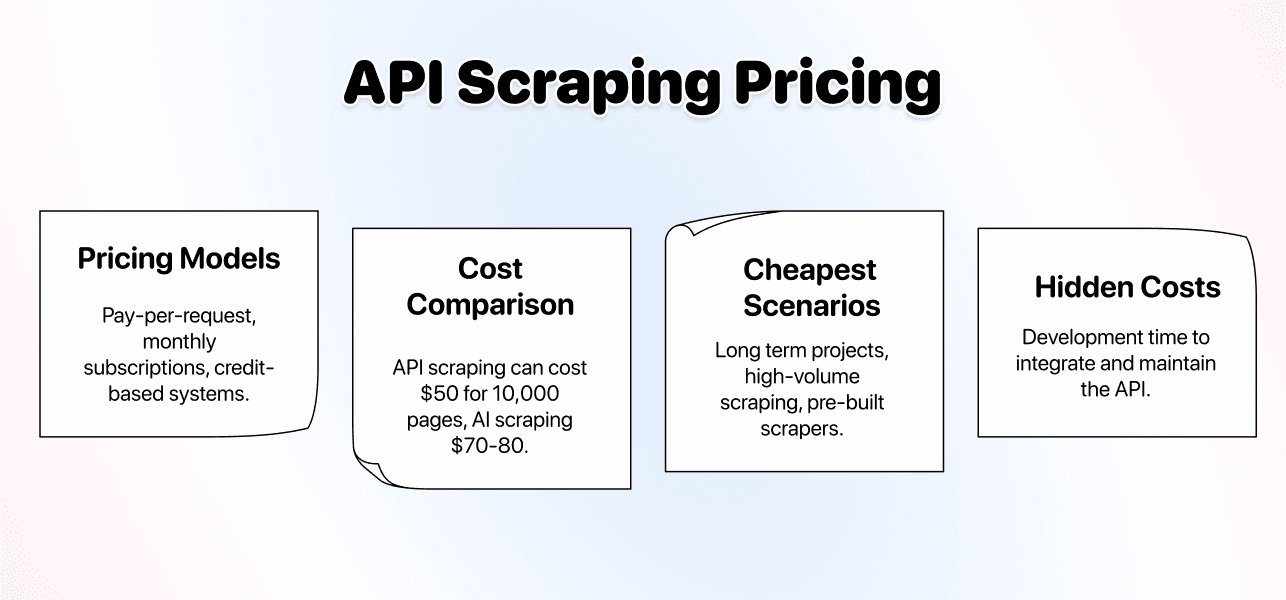

Price

API-based scraping is often the most cost-effective option for high-volume, simple scraping tasks.

Typical pricing models:

- Pay-per-request (often pennies per successful scrape)

- Monthly subscriptions with included requests

- Credit-based systems with bulk discounts

Cost comparison: For scraping 10,000 simple pages per month, API-based solutions can cost $50. The same volume with AI scraping might cost $70-80, but the setup time is much less.
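The comparison above is easier to judge per page. This short Python sketch divides the monthly figures quoted in the paragraph by the page count; it adds no pricing data of its own.

```python
def cost_per_page(monthly_cost: float, pages: int) -> float:
    """Average cost of one scraped page."""
    return monthly_cost / pages


PAGES = 10_000
api = cost_per_page(50, PAGES)
ai_low = cost_per_page(70, PAGES)
ai_high = cost_per_page(80, PAGES)

print(f"API: ${api:.4f} per page")
print(f"AI:  ${ai_low:.4f} to ${ai_high:.4f} per page")
```

At this volume the gap is a fraction of a cent per page, so setup and maintenance time often decide which method is cheaper overall.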

When API scraping is cheapest:

- Long-term projects where development time doesn't matter

- High-volume scraping of simple, stable websites

- Using pre-built scrapers for popular sites

When it gets expensive: If you need custom scraping that the API doesn't support well, you'll waste time and money trying to make it work. The "cheap" solution becomes useless when it doesn't fit your needs.

Hidden costs: Development time to integrate the API and maintain the integration. If you're not technical, you'll need to hire someone, which changes the cost equation dramatically.

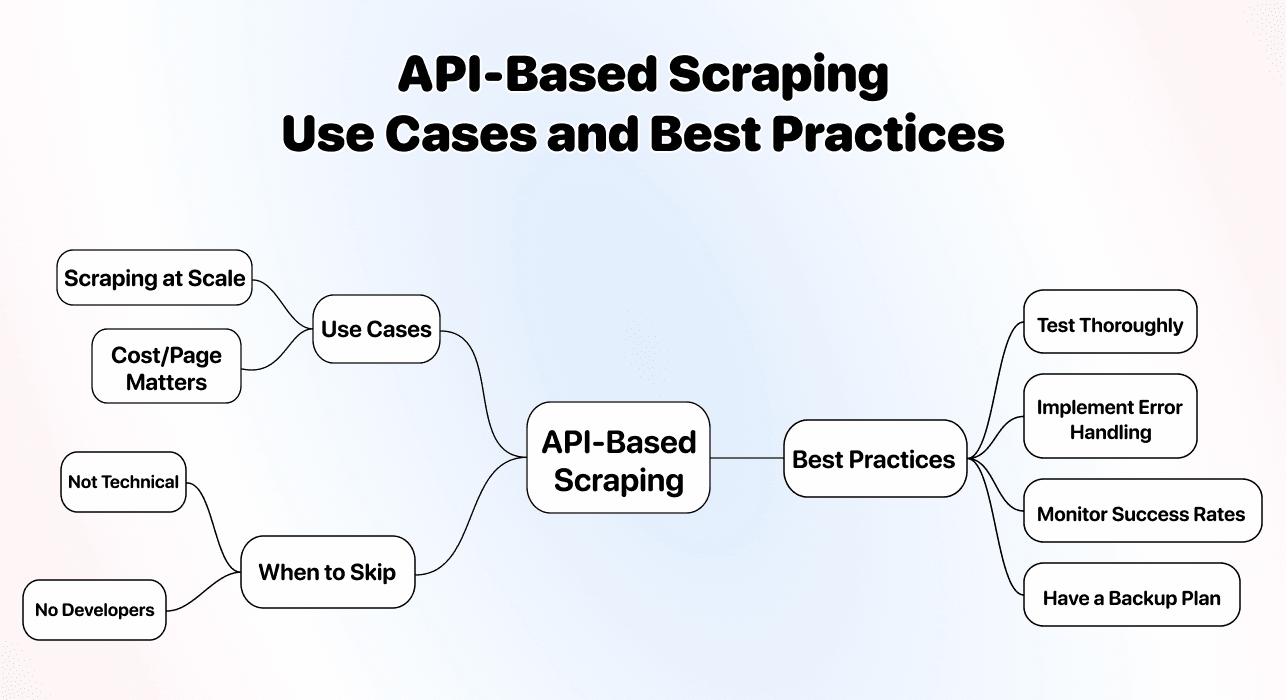

Use Cases and Best Practices

API-based scraping works best for technical teams doing high-volume, repetitive scraping.

Best use cases:

- Scraping at scale (thousands or millions of pages)

- Integrating scraping into applications

- Projects where cost per page matters more than ease of use

Best practices:

- Test thoroughly before committing to a provider

- Implement robust error handling for failed requests

- Monitor success rates to catch when scrapers break

- Have a backup plan if the provider shuts down or changes terms
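Monitoring success rates can start very simply: log whether each request succeeded and raise a flag when the share of successes drops. Here is a minimal Python sketch. The 90% threshold and the helper names are assumptions, so pick values that match your provider and your tolerance for gaps.

```python
def success_rate(outcomes: list[bool]) -> float:
    """Share of successful requests; 0.0 when there is no data yet."""
    return sum(outcomes) / len(outcomes) if outcomes else 0.0


def needs_attention(outcomes: list[bool], threshold: float = 0.9) -> bool:
    """True when the success rate falls below the (assumed) threshold."""
    return success_rate(outcomes) < threshold


# Example log: 17 successful requests and 3 failures.
recent = [True] * 17 + [False] * 3
print(f"{success_rate(recent):.0%}", needs_attention(recent))
```

A sudden drop in this number is often the first sign that a target website changed or that the provider's scraper needs an update.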

When to skip API scraping: If you're not technical and don't have developers on your team, API scraping will frustrate you. The cost savings don't matter if you can't actually use the tool.

Best Tool to Use

The "best" API-based scraper depends on what you're trying to scrape, but here are solid options:

- For general web scraping: ScrapingBee and Bright Data offer reliable API-based scraping for most websites. They handle proxies, browser rendering, and anti-bot measures automatically.

- For specific platforms: Look for specialized APIs (LinkedIn scrapers, Amazon scrapers, etc.). They're optimized for those platforms and handle the specific challenges of each site.

What to look for:

- Clear documentation and examples

- Reliable uptime and support

- Transparent pricing without hidden fees

- Good success rates for your target websites

The reality: Even the best API-based scrapers require technical skills. If "making API calls" sounds complicated to you, choose AI scraping; it will give you more control and peace of mind.

💡 API Scraping Is Cost-Effective But Technical

API-based scraping offers the best cost per page for high-volume projects, but you need technical skills to use it effectively. Don't choose it just because it's cheap if you can't actually implement it, and remember the saying: buy cheap, pay twice.

The Bottom Line: Which Method Should You Choose?

After comparing all three methods, here's how to pick the right one for your situation.

Choose AI Scraping If:

- You're not technical and want the easiest option

- Maintenance time is expensive for you

- Websites you're scraping change frequently

- You need flexibility to adjust what data you extract easily

Best for: Non-technical users, small teams, lead list building, market research, competitive intelligence, and projects where time and ease of use matter more than a few pennies per page.

Best tool: Datablist for a true no-code experience with natural language instructions.

Choose Click-and-Point Tools If:

- You're scraping stable websites that rarely change

- You're comfortable learning technical concepts

- You're okay with maintenance when sites update

- You prefer desktop applications over web-based tools

Best for: Teams with technical comfort, stable government or institutional websites, and projects where configuration time isn't the primary concern.

Best tool: Octoparse for mature features and extensive documentation.

Choose API-Based Scraping If:

- You're technical or have developers on your team

- You're scraping at high volume (thousands of pages daily)

- Cost per page is your primary concern

- You're integrating scraping into applications

Best for: Technical teams, high-volume projects, application integration, and situations where development time is available and cost per page is a priority.

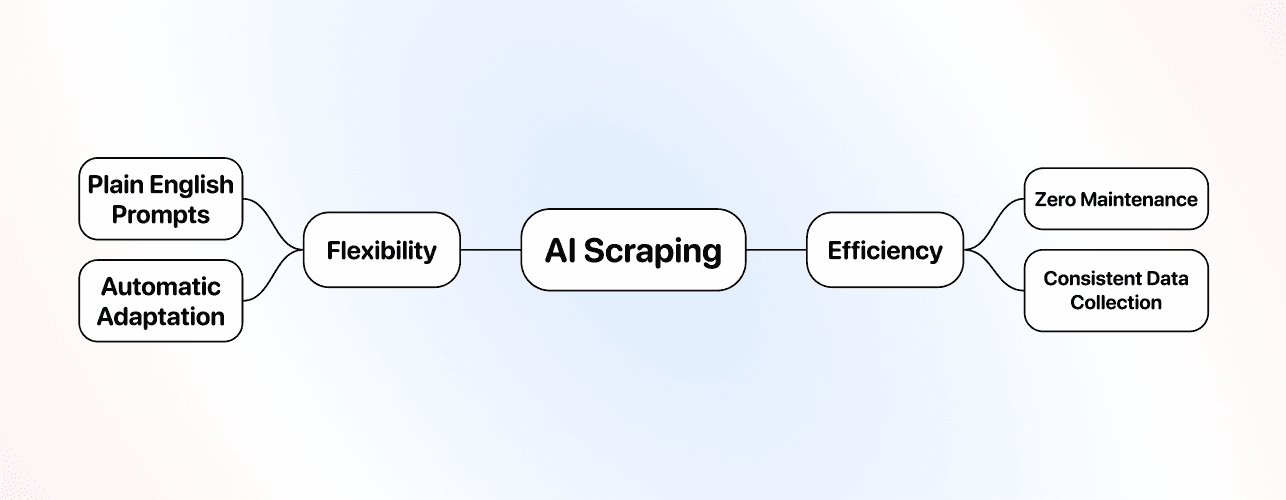

Best tools: ScrapingBee or Bright Data for general scraping, specialized APIs for specific platforms.

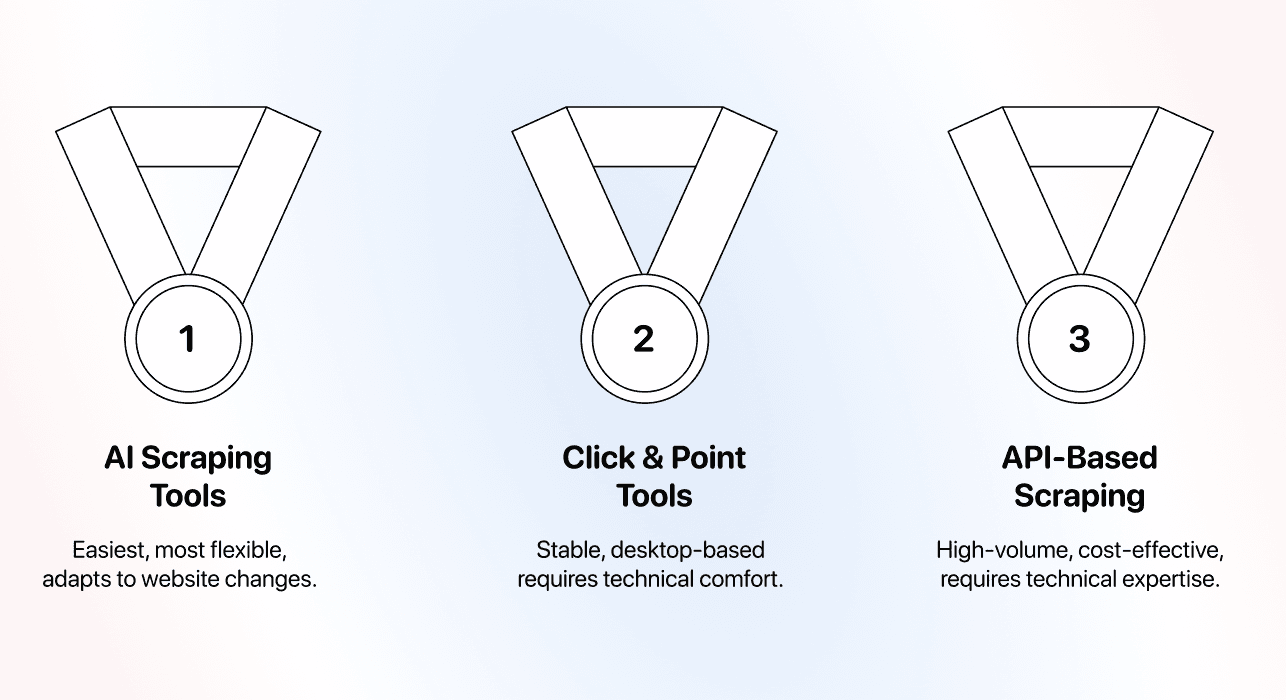

Our Recommendation for Most People

For 80% of users, AI scraping is the right choice. The ease of use, flexibility, and minimal maintenance make it worth the slightly higher cost per page.

The no-code scraping landscape has evolved. What used to require technical skills or hours of configuration now takes minutes with clear instructions.

Here are 3 simple reasons why AI scraping is the best method to start with:

- It’s the easiest method

- It’s the most flexible method

- It requires zero maintenance

And if you want to scale past 10,000 pages per day, you can switch to API-based scrapers.

Frequently Asked Questions

What Is the Most Efficient No-Code Scraping Method?

Efficiency depends on what you're measuring. API-based scraping is most cost-efficient for high-volume projects if you're technical. AI scraping is most time-efficient for setup and maintenance if you're non-technical. For most users, AI scraping offers the best overall efficiency by eliminating technical barriers and maintenance work.

Is AI Scraping Better Than No-Code Scraping?

AI scraping is a type of no-code scraping, just the most advanced version. When people ask this question, they usually mean: "Is AI scraping better than click-and-point tools?" The answer is yes for most use cases. AI scraping adapts to website changes automatically, requires less technical knowledge and maintenance work, and costs less overall than click-and-point tools.

Is AI Scraping Expensive?

AI scraping costs more per page than API-based methods but less than the total cost of click-and-point tools. For scraping 1,000 directory listings, expect to spend 800-1,200 credits (exact cost varies by tool and page complexity). The value comes from zero maintenance and no technical knowledge required, which saves time and money for non-technical users.

What is AI No-Code Scraping?

AI no-code scraping, i.e., AI scraping, refers to using artificial intelligence to extract data from websites without writing code or configuring technical selectors. You describe what data you want in plain English, and the AI understands your intent and handles the technical details. It combines the accessibility of no-code tools with the intelligence to adapt to different website structures automatically.

Can I Use Multiple Scraping Methods Together?

Yes, and many teams do exactly this. Use AI scraping for exploratory work, new websites, and situations where flexibility matters. Once you identify high-volume, repetitive scraping tasks, consider switching those specific tasks to API-based methods for cost savings. Keep in mind that you'll be dealing with technical concepts and that the switch will take time.